A news item from Maynooth University led me to a paper published in Nature with the title Investigating the replicability of the social and behavioural sciences, co-authored by Dermot Lynott, a colleague from the Psychology Department. The abstract reads:

Pursuing replicability — independent evidence for previous claims — is important for creating generalizable knowledge1,2. Here we attempted replications of 274 claims of positive results from 164 quantitative papers published from 2009 to 2018 in 54 journals in the social and behavioural sciences. Replications were high powered on average to detect the original effect size (median of 99.6%), used original materials when relevant and available, and were peer reviewed in advance through a standardized internal protocol. Replications showed statistically significant results in the original pattern for 151 of 274 claims (55.1% (95% confidence interval (CI) 49.2–60.9%)) and for 80.8 of 164 papers (49.3% (95% CI 43.8–54.7%)), weighed for replicating multiple claims per paper. We observed modest variation in replication rates across disciplines (42.5–63.1%), although some estimates had high uncertainty. The median Pearson’s r effect size was 0.25 (95% CI 0.21–0.27) for original studies and 0.10 (95% CI 0.09–0.13) for replication studies, an 82.4% (95% CI 67.8–88.2%) reduction in shared variance. Thirteen methods for evaluating replication success provided estimates ranging from 28.6% to 74.8% (median of 49.3%). Some decline in effect size and significance is expected based on power to detect original effects and regression to the mean because we replicated only positive results. We observe that challenges for replicability extend across social–behavioural sciences, illustrating the importance of identifying conditions that promote or inhibit replicability3,4.

The outcome of this study is that only about half of claimed positive results in such areas as psychology, economics and education were found to be reproducible. The only thing that surprises me about the result is that it is as high as 50%. And before you get snarky about “soft sciences”, there is a similar phenomenon in physics and astronomy too. Physicist John Bahcall famously said that, based on his experience, “about half of all 3σ detections are false”.
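For anyone who wants to check the headline numbers in the abstract, here is a rough sketch in Python. It assumes a Wilson score interval for the replication rate and uses the rounded median effect sizes quoted above; I don't know which interval method or which unrounded values the authors actually used, so treat the function names and the choice of method as my own assumptions rather than a reconstruction of the paper's analysis.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (assumed method)."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


def shared_variance_reduction(r_original, r_replication):
    """Fractional drop in r squared between original and replication."""
    return 1 - r_replication**2 / r_original**2


# 151 of 274 claims replicated, as quoted in the abstract
low, high = wilson_interval(151, 274)
print(f"replication rate: {151 / 274:.1%} ({low:.1%} to {high:.1%})")

# Rounded medians from the abstract: r = 0.25 (original), r = 0.10 (replication)
print(f"reduction in shared variance: {shared_variance_reduction(0.25, 0.10):.1%}")
```

Under these assumptions the interval comes out at about 49.2% to 60.9%, in line with the quoted figures. The rounded effect sizes give a reduction in shared variance of about 84%, a little higher than the 82.4% in the abstract, which presumably comes from unrounded values.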

I think at least some of the irreproducible results stem from inappropriate statistical reasoning and/or the incorrect interpretation of statistical evidence. I’ve published a number of examples of such things on this blog (e.g. here and here). I also wrote a book some years ago trying to explain the centrality of statistical reasoning to science generally (though from a perspective based on my background in cosmology). I thought I would rehash and publish here some paragraphs from the end of that book that touch on public trust in science. I was fairly optimistic then, but things are undoubtedly far worse now. We’re seeing widespread cuts to research funding in the United States, the UK and many other countries.

–0–

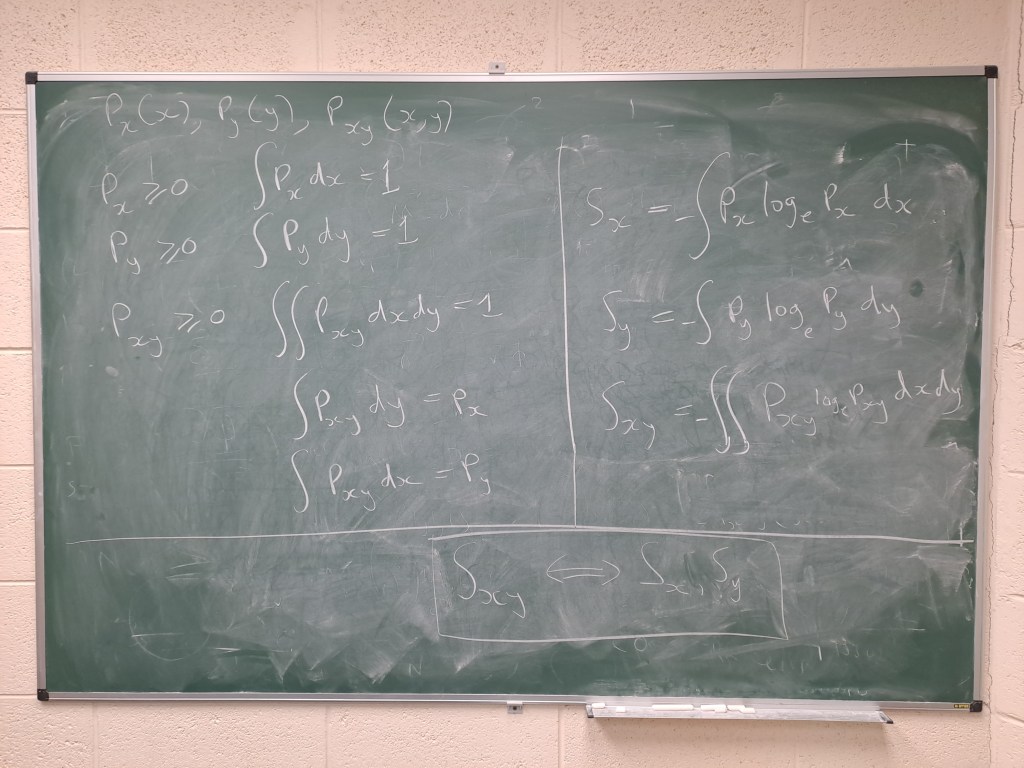

Anyway, I thought I’d take the opportunity to re-iterate why statistics and statistical reasoning are so important to science. In fact, I think they lie at the very core of the scientific method, although I am still surprised how few practising scientists are comfortable with even basic statistical language. A more important problem is the popular impression that science is about facts and absolute truths. It isn’t. It’s a process. In order to advance it has to question itself. Getting this message wrong – whether by error or on purpose – is immensely dangerous.

Statistical reasoning also applies to many facets of everyday life, including business, commerce, transport, the media, and politics. Probability even plays a role in personal relationships, though mostly at a subconscious level. It is a feature of everyday life that science and technology are deeply embedded in every aspect of what we do each day. Science has given us greater levels of comfort, better health care, and a plethora of labour-saving devices. It has also given us unprecedented ability to destroy the environment and each other, whether through accident or design.

Civilized societies face rigorous challenges in this century. We must confront the threat of climate change and forthcoming energy crises. We must find better ways of resolving conflicts peacefully lest nuclear or conventional weapons lead us to global catastrophe. We must stop large-scale pollution or systematic destruction of the biosphere that nurtures us. And we must do all of these things without abandoning the many positive things that science has brought us. Abandoning science and rationality by retreating into religious or political fundamentalism would be a catastrophe for humanity.

Unfortunately, recent decades have seen a wholesale breakdown of trust between scientists and the public at large. This is due partly to the deliberate abuse of science for immoral purposes, and partly to the sheer carelessness with which various agencies have exploited scientific discoveries without proper evaluation of the risks involved. The abuse of statistical arguments has undoubtedly contributed to the suspicion with which many individuals view science.

There is an increasing alienation between scientists and the general public. Many fewer students enrol for courses in physics and chemistry than a few decades ago. Fewer graduates mean fewer qualified science teachers in schools. This is a vicious cycle that threatens our future. It must be broken.

The danger is that the decreasing level of understanding of science in society means that knowledge (as well as its consequent power) becomes concentrated in the minds of a few individuals. This could have dire consequences for the future of our democracy. Very few politicians are scientifically literate. How can we expect to control the application of science when the necessary understanding rests with an unelected “priesthood” that is hardly understood by, or represented in, our democratic institutions?

Very few journalists or television producers know enough about science to report sensibly on the latest discoveries or controversies. As a result, important matters that the public needs to know about do not appear at all in the media, or if they do it is in such a garbled fashion that they do more harm than good.

Years ago I used to listen to radio interviews with scientists on the Today programme on BBC Radio 4. I even did such an interview once. It is a deeply frustrating experience. The scientist usually starts by explaining what the discovery is about in the way a scientist should, with careful statements of what is assumed, how the data is interpreted, and what other possible interpretations might be and the likely sources of error. The interviewer then loses patience and asks for a yes or no answer. The scientist tries to continue, but is badgered. Either the interview ends as a row, or the scientist ends up stating a grossly oversimplified version of the story.

Some scientists offer the oversimplified version at the outset, of course, and these are the ones that contribute to the image of scientists as priests. Such individuals often believe in their theories in exactly the same way that some people believe religiously. Not with the conditional and possibly temporary belief that characterizes the scientific method, but with the unquestioning fervour of an unthinking zealot. This approach may pay off for the individual in the short term, in popular esteem and media recognition – but when it goes wrong it is science as a whole that suffers. When a result that has been proclaimed certain is later shown to be false, the result is widespread disillusionment.

The worst example of this tendency that I can think of is the constant use of the phrase “Mind of God” by theoretical physicists to describe fundamental theories. This is not only meaningless but also damaging. As scientists we should know better than to use it. Our theories do not represent absolute truths: they are just the best we can do with the available data and the limited powers of the human mind. We believe in our theories, but only to the extent that we need to accept working hypotheses in order to make progress. Our approach is pragmatic rather than idealistic. We should be humble and avoid making extravagant claims that can’t be justified either theoretically or experimentally.

The more that people get used to the image of “scientist as priest” the more dissatisfied they are with real science. Most of the questions asked of scientists simply can’t be answered with “yes” or “no”. This leaves many with the impression that science is very vague and subjective. The public also tend to lose faith in science when it is unable to come up with quick answers. Science is a process, a way of looking at problems, not a list of ready-made answers to impossible problems. Of course it is sometimes vague, but I think it is vague in a rational way and that’s what makes it worthwhile. It is also the reason why science has led to so many objectively measurable advances in our understanding of the world.

I realise I must sound very gloomy about this, but I do think there are good prospects that the gap between science and society may gradually be healed. The fact that the public distrust scientists leads many of them to question us, which is a very good thing. They should question us and we should be prepared to answer them. If they ask us why, we should be prepared to give reasons. If enough scientists engage in this process then what will emerge is an understanding of the enduring value of science. I don’t just mean through the DVD players and computer games science has given us, but through its cultural impact. It is part of human nature to question our place in the Universe, so science is part of what we are. It gives us purpose. But it also shows us a way of living our lives. With some notable exceptions, the scientific community is open, internationally-minded, and imbued with a philosophy of cooperation. It values reason and looks to the future rather than the past. Like anyone else, scientists will always make mistakes, but we can always learn from them. The logic of science may not be infallible, but it’s probably the best logic there is in a world so filled with uncertainty.