Very busy day today, what with one thing and another (and another, and another…) so in lieu of a proper post I thought I’d just post this rather excellent cartoon I saw in last week’s Private Eye…

Physics Graduation, according to Private Eye

Posted in Education, The Universe and Stuff with tags Graduation, Physics, Private Eye on August 11, 2015 by telescoper

(Guest Post) – Hidden Variables: Just a Little Shy?

Posted in The Universe and Stuff with tags Anthony Garrett, Bell's Theorem, Quantum Mechanics, Quantum Weirdness, relativity, Socratic Dialogue on August 3, 2015 by telescoper

Time for a lengthy and somewhat provocative guest post on the subject of the interpretation of quantum mechanics!

–o–

Galileo advocated the heliocentric system in a Socratic dialogue. Following the lifting of the Copenhagen view that quantum mechanics should not be interpreted, here is a dialogue about a way of looking at it that promotes progress and matches Einstein’s scepticism that God plays dice. It is embarrassing that we can predict properties of the electron to one part in a billion but we cannot predict its motion in an inhomogeneous magnetic field in apparatus nearly 100 years old. It is tragic that nobody is trying to predict it, because the successes of quantum theory in combination with its strangeness and 20th century metaphysics have led us to excuse its shortcomings. The speakers are Neo, a modern physicist who works in a different area, and Nino, a 19th century physicist who went to sleep in 1900 and recently awoke. – Anton Garrett

Nino: The ultra-violet catastrophe – what about that? We were stuck with infinity when we integrated the amount of radiation emitted by an object over all wavelengths.

Neo: The radiation curve fell off at shorter wavelengths. We explained it with something called quantum theory.
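For reference, the classical and quantum radiation laws behind this exchange can be written side by side. This is the standard textbook statement rather than anything derived in the dialogue, and the symbols (spectral radiance $B_\lambda$, temperature $T$, and the constants $h$, $c$, $k_B$) are introduced here purely for illustration.

```latex
% Classical (Rayleigh-Jeans) law: grows without bound as \lambda \to 0
B_\lambda^{\mathrm{RJ}}(T) = \frac{2 c k_B T}{\lambda^{4}}

% Planck's law: the exponential cuts the curve off at short wavelengths
B_\lambda^{\mathrm{Planck}}(T) = \frac{2 h c^{2}}{\lambda^{5}}\,
    \frac{1}{e^{hc/(\lambda k_B T)} - 1}
```

For $\lambda \gg hc/(k_B T)$ the exponential can be expanded to first order and Planck's law reduces to the classical one. At short wavelengths it falls to zero instead, so the integral over all wavelengths is finite.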

Nino: That’s wonderful. Tell me about it.

Neo: I will, but there are some situations in which quantum theory doesn’t predict what will happen deterministically – it predicts only the probabilities of the various outcomes that are possible. For example, here is what we call a Stern-Gerlach apparatus, which generates a spatially inhomogeneous magnetic field.[i] It is placed in a vacuum and atoms of a certain type are shot through it. The outermost electron in each atom will set off either this detector, labelled ‘A’, or that detector, labelled ‘B.’ All the electrons coming out of detector B (say) have identical quantum description, but if we put them through another Stern-Gerlach apparatus oriented differently then some will set off one of the two detectors associated with it, and some will set off the other.
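The probabilities Neo mentions can be made concrete in the simplest case. The sketch below assumes a spin-1/2 particle (an assumption, since the dialogue does not name the atom) that left the first apparatus through one channel, and applies the standard quantum rule that the chance of taking the matching channel in a second apparatus tilted by an angle theta is cos²(theta/2). The function name is hypothetical.

```python
import math

def second_apparatus_probabilities(theta):
    """Outcome probabilities at a second Stern-Gerlach apparatus.

    Assumes a spin-1/2 particle prepared 'up' along the first apparatus
    axis; theta is the tilt of the second apparatus in radians.
    """
    p_same = math.cos(theta / 2) ** 2      # same channel as before
    p_opposite = math.sin(theta / 2) ** 2  # the other channel
    return p_same, p_opposite

for degrees in (0, 60, 90, 180):
    p_same, p_opposite = second_apparatus_probabilities(math.radians(degrees))
    print(f"{degrees:3d} deg: same={p_same:.3f} opposite={p_opposite:.3f}")
```

Only these probabilities come out of the calculation. Nothing in it says which channel a given particle will take, which is exactly the gap Nino goes on to complain about.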

Nino: Probabilistic prediction is an improvement on my 19th century physics, which couldn’t predict anything at all about the outcome. I presume that physicists in your era are now looking for a theory that predicts what happens each time you put a particle through successive Stern-Gerlach apparatuses.

Neo: Actually we are not. Physicists generally think that quantum theory is the end of the line.

Nino: In that case they’ve been hypnotised by it! If quantum mechanics can’t answer where the next electron will go then we should look beyond it and seek a better theory that can. It would give the probabilities generated by quantum theory as averages, conditioned on not controlling the variables of the new theory more finely than quantum mechanics specifies.

Neo: They are talked of as ‘hidden variables’ today, often hypothetically. But quantum theory is so strange that you can’t actually talk about which detector the atom goes through.

Nino: Nevertheless only one of the detectors goes off. If quantum theory cannot answer which then we should look for a better theory that can. Its variables are manifestly not hidden, for I see their effect very clearly when two systems with identical quantum description behave differently. ‘Hidden variables’ is a loaded name. What you’ve not learned to do is control them. I suggest you call them shy variables.

Neo: Those who say quantum theory is the end of the line argue that the universe is not deterministic – genuinely random.

Nino: It is our theories which are deterministic or not. ‘Random’ is a word that makes our uncertainty about what a system will do sound like the system itself is uncertain. But how could you ever know that?

Neo: Certainly it is problematic to define randomness mathematically. Probability theory is the way to make inference about outcomes when we aren’t certain, and ‘probability’ should mean the same thing in quantum theory as anywhere else. But if you take the hidden variable path then be warned of what we found in the second half of the 20th century. Any hidden variables must be nonlocal.

Nino: How is that?

Neo: Suppose that the result of a measurement of a variable for a particle is determined by the value of a variable that is internal to the particle – a hidden variable. I am being careful not to say that the particle ‘had’ the value of the variable that was measured, which is a stronger statement. The result of the measurement tells us something about the value of its internal variable. Suppose that this particle is correlated with another – if, for example, the pair had zero combined angular momentum when previously they were in contact, and neither has subsequently interacted with anything else. The correlation now tells you something about the internal variable of the second particle. For situations like this a man called Bell derived an inequality; one cannot be more precise because of the generality about how the internal variables govern the outcome of measurements.[ii] But Bell’s inequality is violated by observations on many pairs of particles (as correctly predicted by quantum mechanics). The only physical assumption was that the result of a measurement on a particle is determined by the value of a variable internal to it – a locality assumption. So a measurement made on one particle alters what would have been seen if a measurement had been made on one of the other particles, which is the definition of nonlocality. Bell put it differently, but that’s the content of it.[iii]
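A small numerical check may help here. The sketch below uses the CHSH form of Bell's inequality, a later variant chosen because it is easy to compute and not necessarily the form the dialogue has in mind. It assumes a pair of spin-1/2 particles with zero combined angular momentum, for which quantum mechanics predicts a correlation of -cos(a - b) between detectors set at angles a and b. All function names are hypothetical.

```python
import itertools
import math

def singlet_correlation(a, b):
    """Quantum prediction for the spin correlation of a zero-angular-momentum pair."""
    return -math.cos(a - b)

def chsh(correlation, a, a2, b, b2):
    """CHSH combination of four correlations for settings (a, a2) and (b, b2)."""
    return (correlation(a, b) - correlation(a, b2)
            + correlation(a2, b) + correlation(a2, b2))

def max_local_deterministic():
    """Largest |S| when each particle carries pre-set answers of +1 or -1."""
    best = 0
    for A, A2, B, B2 in itertools.product((-1, 1), repeat=4):
        s = A * B - A * B2 + A2 * B + A2 * B2
        best = max(best, abs(s))
    return best

# Detector angles that maximise the quantum value.
quantum_s = chsh(singlet_correlation, 0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4)
print("local bound:", max_local_deterministic())
print("quantum |S|:", abs(quantum_s))
```

Every assignment of pre-set local answers gives |S| no larger than 2, and averaging over such assignments cannot exceed that bound, whereas the quantum correlation reaches 2√2, about 2.83.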

Nino: Nonlocality is nothing new. It was known as “action at a distance” in Newton’s theory of gravity, several centuries ago.

Neo: But gravitational force falls off as the inverse square of distance. Nonlocal influences in Bell-type experiments are heedless of distance, and this has been confirmed experimentally.[iv]

Nino: In that case you’ll need a theory in which influence doesn’t decay with distance.

Neo: But if influence doesn’t decay with distance then everything influences everything else. So you can’t consider how a system works in isolation any more – an assumption which physicists depend on.

Nino: We should view the fact that it often is possible to make predictions by treating a system as isolated as a constraint on any nonlocal hidden variable theory. It is a very strong constraint, in fact.

Neo: An important further detail is that, in deriving Bell’s inequality, there has to be a choice of how to set up each apparatus, so that you can choose what question to ask each particle. For example, you can choose the orientation of each apparatus so as to measure any one component of the angular momentum of each particle.

Nino: Then Bell’s analysis can be adapted to verify that two people, who are being interrogated in adjacent rooms from a given list of questions, are in clandestine contact in coordinating their responses, beyond having merely pre-agreed their answers to questions on the list. In that case you have a different channel – if they have sharper ears than their interrogators and can hear through the wall – but the nonlocality follows simply from the data analysis, not the physics of the channel.

Neo: In that situation, although the people being interrogated can communicate between the rooms in a way that is hidden from their interrogators, the interrogators in the two rooms cannot exploit this channel to communicate between each other, because the only way they can infer that communication is going on is by getting together to compare their sets of answers. Correspondingly, you cannot use pre-prepared particle pairs to infer the orientation of one detector by varying the orientation of the second detector and looking at the results of particle measurements at that second detector alone. In fact there are general no-signalling theorems associated with the quantum level of description.[v] There are also more striking verifications of nonlocality using correlated particle pairs,[vi] and with trios of correlated particles.[vii]
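The no-signalling point can be illustrated for the same zero-angular-momentum pair of spin-1/2 particles (an assumed example, not one worked in the dialogue). Quantum mechanics gives the joint probability of results x and y, each +1 or -1, at detector angles a and b as (1 - x·y·cos(a - b))/4. The sketch sums over the first detector's results to see what the second detector shows on its own. Function names are hypothetical.

```python
import math

def joint_probability(x, y, a, b):
    """Quantum joint probability of results x, y (each +1 or -1) at angles a, b."""
    return (1 - x * y * math.cos(a - b)) / 4

def marginal_at_second(y, a, b):
    """Probability of result y at the second detector, ignoring the first."""
    return sum(joint_probability(x, y, a, b) for x in (-1, 1))

b = 0.3  # fixed orientation of the second detector, in radians
for a in (0.0, 0.7, 1.5, 3.0):  # vary the first detector's orientation
    print(f"a={a:.1f}: P(y=+1)={marginal_at_second(+1, a, b):.3f}")
```

The printed probability stays at one half whatever the first detector's angle is, so the statistics at one detector alone carry no information about the other's setting. The correlation shows up only when the two sets of results are brought together.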

Nino: Again you can apply the analysis to test for clandestine contact between persons interrogated in separate rooms. Let me explain why I would always search for the physics of the communication channel between the particles, the hidden variables. In my century we saw that tiny particles suspended in water, just visible under our microscopes, jiggle around. We were spurred to find the reason – the particles were being jostled by smaller ones still, which proved to be the smallest unit you can reach by subdividing matter using chemistry: atoms. Upon the resulting atomic theory you have built quantum mechanics. Since then you haven’t found hidden variables underneath quantum mechanics in nearly 100 years. You suggest they aren’t there to be found but essentially nobody is looking, so that would be a self-fulfilling prophecy. If the non-determinists had been heeded about Brownian motion – and there were some in my time, influenced by philosophers – then the 21st century would still be stuck in the pre-atomic era. If one widget of a production line fails under test but the next widget passes, you wouldn’t say there was no reason; you’d revise your view that the production process was uniform and look for variability in it, so that if you learn how to deal with it you can make consistently good widgets.

Neo: But production lines aren’t based on quantum processes!

Nino: But I’m not wedded to quantum mechanics! I am making a point of logic, not physics. Quantum mechanics has answered some questions that my generation couldn’t and therefore superseded the theories of my time, so why shouldn’t a later generation than yours supersede quantum mechanics and answer questions that you couldn’t? It is scientific suicide for physicists to refuse to ask a question about the physical world, such as what happens next time I put a particle through successive Stern-Gerlach apparatuses. You say you are a physicist but the vocation of physicists is to seek to improve testable prediction. If you censor or anaesthetise yourself, you’ll be stuck up a dead end.

Neo: Not so fast! Nonlocality isn’t the only radical thing. The order of measurements in a Bell setup is not Lorentz-invariant, so hidden variables would also have to be acausal – effect preceding cause.

Nino: What does ‘Lorentz-invariant’ mean, please?

Neo: This term came out of the theory that resolved your problems about aether. Electromagnetic radiation has wave properties but does not need a medium (‘aether’) to ‘do the waving’ – it propagates through a vacuum. And its speed relative to an observer is always the same. That matches intuition, because there is no preferred frame that is defined by a medium. But it has a counter-intuitive consequence, that velocities do not add linearly. If a light wave overtakes me at c (lightspeed) then a wave-chasing observer passing me at v still experiences the wave overtaking him at c, although our familiar linear rule for adding velocities predicts (c – v). That rule is actually an approximation, accurate at speeds much less than c, which turns out to be a universal speed limit. For the speed of light to be constant for co-moving observers then, because speed is distance divided by time, space and time must look different to these observers. In fact even the order of events can look different to two co-moving observers! The transformation rule for space and time is named after a man called Lorentz. That not just the speed of light but all physical laws should look the same for observers related by the Lorentz transformation is called the relativity principle. Its consequences were worked out by a man called Einstein. One of them is that mass is an extremely concentrated form of energy. That’s what fuels the sun.
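The rule that replaces linear velocity addition is short enough to try out. The sketch below uses the standard relativistic formula for motion along a single line; the function and variable names are hypothetical.

```python
C = 299_792_458.0  # speed of light in metres per second

def relative_velocity(u, v):
    """Velocity of something moving at u, as measured by an observer moving at v.

    Both velocities are along the same line and measured in the same frame.
    """
    return (u - v) / (1 - u * v / C**2)

# Everyday speeds: indistinguishable from the linear rule u - v.
print(relative_velocity(30.0, 10.0))

# A light wave chased at half lightspeed still recedes at c, not c/2.
print(relative_velocity(C, 0.5 * C) / C)
```

Setting u equal to C makes the numerator C - v and the denominator (C - v)/C, so the result is C for any chasing speed v below C.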

Nino: He was obviously a brilliant physicist!

Neo: Yes, although he would have been shocked by Bell’s theorem.[viii] He asserted that God did not play dice[ix] – determinism – but he also spoke negatively of nonlocality, as “spooky actions at a distance.”[x] Acausality would have shocked him even more. The order of measurements on the two particles in a Bell setup can be different for two co-moving observers. So an observer dashing through the laboratory might see the measurements done in the reverse of the order that the experimenter logs. So at the hidden-variable level we cannot say which particle signals to which as a result of the measurements being made, and the hidden variables must be acausal. Acausality is also implied in ‘delayed choice’ experiments, as follows.[xi] Light – and, remarkably, all matter – has both particle properties (it can pass through a vacuum) and wave properties (diffraction), but only displays one property at a time. Suppose we decide, after a unit of light has passed a pair of Young’s slits, whether to measure the interference pattern – due to its diffractive properties as a wave propagating through both slits – or its position, which would tell us which single slit it traversed. According to quantum mechanics our choice seems to determine whether it traverses one slit or both, even though we made that choice after it had passed through! Acausality means that you would have to know the future in order to predict it, so this is a limit on prediction – confirming the intuition of quantum theorists that you can’t do better.
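The claim that the order of the two measurements depends on the observer follows directly from the Lorentz transformation of time. The sketch below works in units where c = 1 and uses made-up separations between the two measurement events; the function name is hypothetical.

```python
import math

def transformed_time_gap(dt, dx, v):
    """Time gap between two events for an observer moving at speed v (c = 1).

    dt and dx are the time and space separations in the laboratory frame.
    """
    gamma = 1.0 / math.sqrt(1.0 - v * v)
    return gamma * (dt - v * dx)

# Two measurements far apart in space but close in time (dx > dt, so no
# light signal could connect them).
dt, dx = 1.0, 10.0
for v in (0.0, 0.05, 0.5):
    print(f"v={v}: time gap = {transformed_time_gap(dt, dx, v):+.3f}")
```

The sign of the gap flips for the fastest observer, who therefore sees the second measurement happen first. For events that a light signal could connect (dx less than dt) the sign never changes for any v below 1.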

Nino: That will be so in acausal experimental situations, I accept. I believe the theory of the hidden variables will explain why time, unlike space, passes, and also entail a no-time-paradox condition.

Neo: Today we say that a theory must not admit closed time-like trajectories in space-time.

Nino: But a working hidden-variable theory would still give a reason why the system behaves as it did, even if we can’t access the information needed for prediction in situations inferred to be acausal. You can learn a lot from evolution equations even if you don’t know the initial conditions. And often the predictions of quantum theory are compatible with locality and causality, and in those situations the hidden variables might predict the outcome of a measurement exactly, outdoing quantum theory.

Neo: It also turned out that some elements of the quantum formalism do not correspond to things that can be measured experimentally. That was new in physics and forced physicists to think about interpretation. If differing interpretations give the same testable predictions, how do we choose between them?

Nino: Metaphysics then enters and it may differ among physicists, leading to differing schools of interpretation. But non-physical quantities have entered the equations of a theory before. A potential appears in the equations of Newtonian gravity and electromagnetism, but only differences in potential correspond to something physical.

Neo: That invariance, greatly generalised, lies behind the ‘gauge’ theories of my era. These are descriptions of further fundamental forces, conforming to the relativity principle that physics must look the same to co-moving observers related by the Lorentz transformation. That includes quantum descriptions, of course.[xii] It turned out that atoms have their positive charge in a nucleus contributing most of the mass of an atom, which is orbited by much lighter negatively charged particles called electrons – different numbers of electrons for different chemical elements. Further forces must exist to hold the positively charged particles in the nucleus together against their mutual electrical repulsion. These further forces are not familiar in everyday life, so they must fall off with distance much faster than the inverse square law of electromagnetism and gravity. Mass ‘feels’ gravity and charge feels electromagnetic forces, and there are analogues of these properties for the intranuclear forces, which are also felt by other more exotic particles not involved in chemistry. We have a unified quantum description of the intranuclear forces combined with electromagnetism that transforms according to the relativity principle, which we call the standard model, but we have not managed to incorporate gravity yet.

Nino: But this is still a quantum theory, still non-deterministic?

Neo: Ultimately, yes. But it gives a fit to experiment that is better than one part in a thousand million for certain properties of the electron – which it does predict deterministically.[xiii] That is the limit of experimental accuracy in my era, and nobody has found an error anywhere else.

Nino: That’s magnificent, and it says a huge amount for the progress of experimental physics too. But I still see no reason to move from can-do to can’t-do in aiming to outdo quantum theory.

Neo: Let me explain some quantum mechanics.[xiv] The variables we observe in regular mechanics, such as momentum, correspond to operators in quantum theory. The operators evolve deterministically according to the Hamiltonian of the system; waves are just free-space solutions. When you measure a variable you get one of its eigenvalues, which are real-valued because the operators are Hermitian. Quantum mechanics gives a probability distribution over the eigenspectrum. After the measurement, the system’s quantum description is given by the corresponding eigenfunction. Unless the system was already described by that eigenfunction before the measurement, its quantum description changes. That is a key difference from classical mechanics, in which you can in principle observe a system without disturbing it. Such a change (‘collapse’) makes it impossible to determine simultaneously the values of variables whose operators do not have coincident eigenfunctions – in other words, non-commuting operators. It has even been shown, using commuting subsets of the operators of a system in which members of differing sets do not commute, that simultaneous values of differing variables of a system cannot exist.[xv]
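The smallest example of what Neo describes is the set of spin-1/2 operators, the Pauli matrices, which are an assumed illustration and not discussed in the dialogue. The sketch checks that each has real eigenvalues and that two different components do not commute. It uses plain Python lists so that it has no dependencies; the helper names are hypothetical.

```python
import math

SIGMA_X = [[0, 1], [1, 0]]
SIGMA_Y = [[0, -1j], [1j, 0]]
SIGMA_Z = [[1, 0], [0, -1]]

def matmul(a, b):
    """Product of two 2x2 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def commutator(a, b):
    """The matrix ab - ba; all zeros exactly when a and b commute."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[ab[i][j] - ba[i][j] for j in range(2)] for i in range(2)]

def hermitian_eigenvalues(m):
    """Eigenvalues of a 2x2 Hermitian matrix from its trace and determinant."""
    trace = complex(m[0][0] + m[1][1]).real
    det = complex(m[0][0] * m[1][1] - m[0][1] * m[1][0]).real
    root = math.sqrt(trace * trace - 4 * det)
    return (trace - root) / 2, (trace + root) / 2

print(hermitian_eigenvalues(SIGMA_Y))  # the possible measurement results
print(commutator(SIGMA_X, SIGMA_Y))    # non-zero: no shared eigenfunctions
```

The commutator of the x and y components comes out as 2i times the z component. Because it is not zero, the two operators share no eigenfunctions, so a measurement of one spin component disturbs the quantum description with respect to the other.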

Nino: Does that result rest on any aspect of quantum theory?

Neo: Yes. Unlike Bell setups, which compare experiment with a locality criterion, neither of which has anything to do with quantum mechanics (it simply predicts what is seen), this further result is founded in quantum mechanics.

Nino: But I’m not committed to quantum mechanics! This result means that the hidden variables aren’t just the values of all the system variables, but comprise something deeper that somehow yields the system variables and is not merely equivalent to the set of them.

Neo: Some people suggest that reality is operator-valued and our perplexities arise because of our obstinate insistence on thinking in – and therefore trying to measure – scalars.

Nino: An operator is fully specified by its eigenvalues and eigenfunctions; it can be assembled as a sum over them, so if an operator is a real thing then they would be real things too. If a building is real, the bricks it is constructed from are real. But I still insist that, like any other physical theory, quantum theory should be regarded as provisional.

Neo: Quantum theory answered questions that earlier physics couldn’t, such as why electrons do not fall into the nucleus of an atom although opposite charges attract. They populate the eigenspectrum of the Hamiltonian for the Coulomb potential, starting at the lowest energy eigenfunction, with not more than two electrons per eigenfunction. When the electrons are disturbed they jump between eigenvalues, so that they cannot fall continuously. This jumping is responsible for atomic spectrum lines, whose vacuum wavelength is inversely proportional to the difference in energy of the eigenvalues. That is why quantum mechanics was accepted. But the difficulty of understanding it led scientists to take a view, championed by a senior physicist at Copenhagen, that quantum mechanics was merely a way of predicting measurements, rather than telling us how things really are.
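The link between energy eigenvalues and spectral lines can be checked for hydrogen, the simplest case, where the eigenvalues of the Coulomb Hamiltonian are -13.6 eV divided by n². The sketch is a rough illustration that ignores reduced-mass and fine-structure corrections; the constant and function names are hypothetical.

```python
RYDBERG_ENERGY_EV = 13.605693  # binding energy of the hydrogen ground state, eV
HC_EV_NM = 1239.841984         # Planck's constant times lightspeed, eV nm

def level_energy(n):
    """Energy eigenvalue of hydrogen level n (n = 1, 2, 3, ...), in eV."""
    return -RYDBERG_ENERGY_EV / n**2

def transition_wavelength_nm(n_upper, n_lower):
    """Vacuum wavelength of the light emitted in a jump between two levels."""
    delta_e = level_energy(n_upper) - level_energy(n_lower)
    return HC_EV_NM / delta_e

print(transition_wavelength_nm(3, 2))  # red line of the visible hydrogen spectrum
print(transition_wavelength_nm(2, 1))  # ultraviolet line
```

The jump from level 3 to level 2 comes out at roughly 656 nm, the familiar red hydrogen line, and the larger energy gap between levels 2 and 1 gives a proportionally shorter wavelength near 122 nm.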

Nino: That distinction is untestable even in classical mechanics. This is really about motivation. If you don’t believe that things ‘out there’ are real then you’ll have no motivation to think about them. The metaphysics beneath physics supposes that there is order in the world and that humans can comprehend it. Those assumptions were general in Europe when modern physics began. They came from the belief that the physical universe had an intelligent creator who put order in it, and that humans could comprehend this order because they had things in common with the creator (‘in his image’). You don’t need a religious faith to enter physics once it has got going and the patterns are made visible for all to see; but if ever the underlying metaphysics again becomes relevant, as it does when elements of the formalism do not correspond to things ‘out there,’ then such views will count. If you believe there is comprehensible and interesting order in the material universe then you will be more motivated to study it than others who suppose that differentiation is illusion and that all is one, i.e. the monist view held by some other cultures. So, in puzzling why people aren’t looking for those not-so-hidden variables, let me ask: Did the view that nature was underpinned by a divine creator get weaker where quantum theory emerged, in Europe, in the era before the Copenhagen view?

Neo: Religion was weakening during your time, as you surely noticed. That trend did continue.

Nino: I suggest the shift from optimism to defeatism about improving testable prediction is a reflection of that change in metaphysics reaching a tipping point. Culture also affects attitudes; did anything happen that induced pessimism between my era and the birth of quantum mechanics?

Neo: The most terrible war in history to that date took place in Europe. But we have moved on from the Copenhagen ‘interpretation’ which was a refusal of all questions about the formalism. That stance is acceptable provided it is seen as provisional, perhaps while the formalism is developed; but not as the last word. Physicists eventually worked out the standard model for the intranuclear forces in combination with electromagnetism. Bell’s theorem also catalysed further exploration of the weirdness of quantum mechanics, from the 1960s; let me explain. Before a measurement of one of its variables, a system is generally not in an eigenstate of the corresponding operator. This means that its quantum description must be written as a superposition of eigenstates. Although measurement discloses a single eigenvalue, remarkable things can be done by exploiting the superposition. We can gain information about whether the triggering mechanisms of light-activated bombs are good or dud triggers in an experiment in which light might be shone at each, according to a quantum mechanism.[xvi] (This does involve ‘sacrificing’ some of the working bombs, but without the quantum trick you would be completely stuck, because the bomb is booby-trapped to go off if you try to dismantle the trigger.) Even though we have electronic computers today that do millions of operations per second, many calculations are still too large to be done in feasible lengths of time, such as integer factorisation. We can now conceive of quantum computers that exploit the superposition to do many calculations in one, and drastically speed things up.[xvii] Communications can be made secure in the sense that eavesdropping cannot be concealed, as it would unavoidably change the quantum state of the communication system.
The apparent reality of the superposition in quantum mechanics, together with the non-existence of definite values of variables in some circumstances, means that it is unclear what in the quantum formalism is physical, and what is our knowledge about the physical – in philosophical language, what is ontological and what is epistemological. Some people even suggest that, ultimately, numbers – or at least information quantified as numbers – are physics.
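The bomb-testing trick can be sketched with a two-path interferometer. This is an assumed setup in the style of the usual textbook treatment, which the dialogue does not spell out: a single photon meets a half-silvered mirror, the trigger sits in one path, and a second half-silvered mirror recombines the paths. A live trigger absorbs the photon if it is there; a dud lets it pass. All names are hypothetical.

```python
import math

def beam_splitter(upper, lower):
    """50/50 beam splitter acting on the amplitudes of the two paths."""
    s = 1 / math.sqrt(2)
    return s * (upper + 1j * lower), s * (1j * upper + lower)

def bomb_test(bomb_is_live):
    """Outcome probabilities for one photon sent through the interferometer."""
    upper, lower = beam_splitter(1.0, 0.0)  # photon enters on the upper path
    p_explode = 0.0
    if bomb_is_live:
        p_explode = abs(lower) ** 2  # photon found at the trigger in the lower path
        lower = 0.0                  # otherwise only the upper path survives
    out_upper, out_lower = beam_splitter(upper, lower)
    return {
        "explodes": p_explode,
        "dark_port": abs(out_upper) ** 2,    # never fires for a dud
        "bright_port": abs(out_lower) ** 2,
    }

print("dud: ", bomb_test(False))
print("live:", bomb_test(True))
```

With a dud the two paths interfere and the photon always leaves by the bright port. With a live trigger half the bombs go off, but a quarter of the time the photon reaches the dark port, which certifies a working trigger that the photon never touched.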

Nino: That’s a woeful confusion – information about what? As for deeper explanation, when things get weird you either give up on going further – which no scientist should ever do – or you take the weirdness as a clue. Any no-hidden-variables claim must involve assumptions or axioms, because you can’t prove something is impossible without starting from assumptions. So you should expose and question those assumptions (such as locality and causality). Don’t accept any axioms that are intrinsic to quantum theory, because your aim is to go beyond quantum theory.

Neo: Some people, particularly in quantum computing, suggest that when a variable is measured in a situation in which quantum mechanics predicts the result probabilistically, the universe actually splits into many copies, with each of the possible values realised in one copy.[xviii] We exist in the copy in which the results were as we observed them, but in other universes copies of us saw other results.

Nino: We couldn’t observe the other universes, so this is metaphysics, and more fantastic than Jules Verne! What if the spectrum of possible outcomes includes a continuum of eigenvalues? Furthermore a measurement involves an interaction between the measuring apparatus and the system, so the apparatus and system could be considered as a joint system quantum-mechanically. There would be splitting into many worlds if you treat the system as quantum and the apparatus as classical, but no splitting if you treat them jointly as quantum. Nothing privileges a measuring apparatus, so physicists are free to analyse the situation in these two differing ways – but then they disagree about whether splitting has taken place. That’s inconsistent.

Neo: The two descriptions must be reconciled. As I said, a system left to itself evolves according to the Hamiltonian of the system. When one of its variables is measured, it undergoes an interaction with an apparatus that makes the measurement. The system finishes in an eigenstate of the operator corresponding to the variable measured, while the apparatus flags the corresponding eigenvalue. This scenario has to be reconciled with a joint quantum description of the system and apparatus, evolving according to their joint Hamiltonian including an interaction term. Reconciliation is needed in order to make contact with scalar values and prevent a regress problem, since the apparatus interacts quantum-mechanically with its immediate surroundings, and so on. Some people propose that the regress is truncated at the consciousness of the observer.

Nino: I thought vitalism was discredited once the soul was found to be massless, upon weighing dying creatures! The proposal you mention actually makes the regress problem worse, because if the result of a measurement is communicated to the experimenter via intermediaries who are conscious – who are aware that they pass on the result – then does it count only when it reaches the consciousness of the experimenter (an instant of time that is anyway problematic to define)? If so, why?

Neo: That’s a regress problem on the classical side of the chain, whereas I was talking about a regress problem on the quantum side. This suggests that the regress is terminated where the system is declared to have a classical description.[xix] I fully share your scepticism about the role of consciousness and free will. Human subjects have tried to mentally influence the outcomes of quantum measurements and it is not accepted that they can alter the distribution from the quantum prediction. Some people even propose that consciousness exists because matter itself is conscious and the brain is so complex that this property is manifest. But they never clarify what it means to say that atoms may have consciousness, even of a primitive sort.

Nino: Please explain how our regress terminates where we declare something classical.

Neo: For any measured eigenvalue of the system there are generally many degrees of freedom in the Hamiltonian of the apparatus, so that the density of states of the apparatus is high. (This is true even if the quantum states are physically large, as in low temperature quantum phenomena such as superconductivity.) Consider the apparatus variable that flags the result of the measurement. In the sum over states giving the expectation value of this variable, cross terms between quantum states of the apparatus corresponding to different eigenvalues of the system are very numerous. These cross terms are not generally correlated in amplitude or phase, so that they average out in the expectation value in accordance with the law of large numbers.[xx] Even if that is not the case they are usually washed out by interactions with the environment, because you cannot in practice isolate a system perfectly. This is called decoherence,[xxi] and nonlocality and those striking quantum-computer effects can only be seen when you prevent it.
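The averaging-out of uncorrelated cross terms can be seen in a toy model. The sketch below is only a cartoon of the argument, not a model of any real apparatus: each cross term is represented by a unit-magnitude complex number, with a phase that is either drawn at random or identical for every term. The function name and parameters are hypothetical.

```python
import cmath
import math
import random

def mean_cross_term(n_terms, rng, aligned=False):
    """Magnitude of the average of n_terms unit-size complex cross terms.

    With aligned=True every term has the same phase; otherwise each phase
    is drawn uniformly at random, standing in for 'uncorrelated'.
    """
    total = 0j
    for _ in range(n_terms):
        phase = 0.0 if aligned else rng.uniform(0.0, 2.0 * math.pi)
        total += cmath.exp(1j * phase)
    return abs(total) / n_terms

rng = random.Random(0)  # fixed seed so that runs are repeatable
for n in (10, 1_000, 100_000):
    print(n, mean_cross_term(n, rng), mean_cross_term(n, rng, aligned=True))
```

Terms with a common phase keep an average of size one however many there are, whereas randomly phased terms shrink roughly as one over the square root of their number. That is the law-of-large-numbers behaviour Neo invokes.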

Nino: Remarkable! But you still have only statistical prediction of which eigenvalue is observed.

Neo: Your deterministic viewpoint has been disparaged by some as an outmoded, clockwork view of the universe.

Nino: Just because I insist on asking where the next particle will go in a Stern-Gerlach apparatus? Determinism is a metaphysical assumption; no more or less. It inspires progress in physics, which any physicist should support. Let me return to nonlocality and acausality (which is a kind of directional nonlocality with respect to time, rather than space). They imply that the physical universe is an indivisible whole at the fundamental level of the hidden variables. That is monist, but is distinct from religious monism because genuine structure exists in the hidden – or rather shy – variables.

Neo: Certainly space and time seem to be irrelevant to the hidden interactions between particles that follow from Bell tests. As I said, we have a successful quantum description of electromagnetic interactions and have combined it with the forces that hold the atomic nucleus together. In this description we regard the electromagnetic field itself as an operator-valued variable, according to the prescription of quantum theory. The next step would be to incorporate gravity. That would not be Newtonian gravity, which cannot be right because, unlike Maxwell’s equations, it only looks the same to co-moving observers who are related by the Galilean transform of space and time – itself only a low-speed approximation to the correct Lorentz transform. Einstein found a theory of gravity that transforms correctly, known as general relativity, and to which Newton’s theory is an approximation. Einstein’s view was that space and time were related to gravity differently than to the other forces, but a theory that is almost equivalent to his (predicting identically in all tests to date) has since emerged that is similar to electromagnetism – a gauge theory in which the field is coupled naturally to matter which is described quantum-mechanically.[xxii] Unlike electromagnetism, however, the gravitational field itself has not yet been successfully quantised, hindering the marrying of it to other forces so as to unify them all. Of course we demand a theory that takes account of both quantum effects and relativistic gravity, for any theory that neglects quantum effects becomes increasingly inaccurate where these are significant – typically on the small scale inside atoms – while any theory that neglects relativistic gravitational effects becomes increasingly inaccurate where they are significant – typically on large scales where matter is gathered into dense massive astronomical bodies.
Not even light can escape from some of these bodies – and, because the speed of light is a universal speed limit, nor can anything else. Quantum and gravitational effects are both large if you look at the universe far enough back in time, because we have learned that the universe was once very small and very dense. So a complete theory is indispensable for cosmologists who seek to study origins. The preferred program for quantum gravity today is known as string theory. But it has a deeply complicated structure and is infeasible to test experimentally, rendering progress slow.

Nino: But it’s still not a complete theory if it’s a quantum theory. Please say more about that very small dense stage of the universe which presumably expanded to give what we see today.

Neo: We believe the early part of the expansion underwent a great boost, known as inflation, which explains how the universe is unexpectedly smooth on the largest scale today and is also not dominated by gravity. Everything in the observed universe was, in effect, enormously diluted. Issues of causality also arise. But the mechanism for inflation is conjectural, and inflation raises other questions.

Nino: Unexpected large-scale smoothness sounds to me like a manifestation of nonlocality. Furthermore the hidden variables are acausal. Perhaps you cannot do without them at such extreme densities and temperatures. Then you wouldn’t need to invoke inflation.

Neo: We believe that inflation took place after the ‘Planck’ era in which a full quantum theory of gravity is indispensable for accuracy. In that case our present understanding is adequate to describe the inflationary epoch.

Nino: You are considering the entire universe, yet you cannot predict which detector goes off next when consecutive particles having identical quantum description are fired through a Stern-Gerlach apparatus. Perhaps you should walk before you run. Then your problems in unifying the fundamental forces and applying the resulting theory to the entire universe might vanish.

Neo: That’s ironic – the older generation exhorting the younger to revolution! To finish, what would you say to my generation of physicists?

Nino: It is magnificent that you can predict properties of the electron to nine decimal places, but that makes it more embarrassing that you cannot tell something as basic as which way a silver atom will pass through an inhomogeneous magnetic field, according to its outermost electron. That incapability should be an itch inside your oyster shell. Seek a theory which predicts the outcome when systems having identical quantum specification behave differently. Regard all strange outworkings of quantum mechanics as information about the hidden variables. Purported no-hidden-variables theorems that are consistent with quantum mechanics must contain extra assumptions or axioms, so put such theorems to work for you by ensuring that your research violates those assumptions. Ponder how to reconcile the success of much prediction upon treating systems as isolated with the nonlocality and acausality visible in Bell tests. Don’t let anything put you off because, barring a lucky experimental anomaly, only seekers find. By doing that you become part of a great project.

Anthony Garrett has a PhD in physics (Cambridge University, 1984) and has held postdoctoral research contracts in the physics departments of Cambridge, Sydney and Glasgow Universities. He is Managing Editor of Scitext Cambridge (www.scitext.com), an editing service for scientific documents.

i Gerlach, W. & Stern, O., “Das magnetische Moment des Silberatoms”, Zeitschrift für Physik 9, 353-355 (1922).

ii Bell, J.S., “On the Einstein Podolsky Rosen paradox”, Physics 1, 195-200 (1964).

iii Garrett, A.J.M., “Bell’s theorem and Bayes’ theorem”, Foundations of Physics 20, 1475-1512 (1990).

iv The most rigorous test of Bell’s theorem to date is: Giustina, M., Mech, A., Ramelow, S., Wittmann, B., Kofler, J., Beyer, J., Lita, A., Calkins, B., Gerrits, T., Nam, S.-W., Ursin, R. & Zeilinger, A., “Bell violation using entangled photons without the fair-sampling assumption”, Nature 497, 227-230 (2013). For a test of the 3-particle case, see: Bouwmeester, D., Pan, J.-W., Daniell, M., Weinfurter, H. & Zeilinger, A., “Observation of three-photon Greenberger-Horne-Zeilinger entanglement”, Physical Review Letters 82, 1345-1349 (1999).

v Bussey, P.J., “Communication and non-communication in Einstein-Rosen experiments”, Physics Letters A123, 1-3 (1987).

vi Mermin, N.D., “Quantum mysteries refined”, American Journal of Physics 62, 880-887 (1994). This is a very clear tutorial recasting of: Hardy, L., “Nonlocality for two particles without inequalities for almost all entangled states”, Physical Review Letters 71, 1665-1668 (1993).

vii Mermin, N.D., “Quantum mysteries revisited”, American Journal of Physics 58, 731-734 (1990). This is a tutorial recasting of the ‘GHZ’ analysis: Greenberger, D.M., Horne, M.A. & Zeilinger, A., 1989, “Going beyond Bell’s theorem”, in Bell’s Theorem, Quantum Theory and Conceptions of the Universe, ed. M. Kafatos (Kluwer Academic, Dordrecht, Netherlands), p.69-72.

viii Einstein, A., Podolsky, B. & Rosen, N., “Can quantum-mechanical description of physical reality be considered complete?”, Physical Review 47, 777-780 (1935).

ix Einstein, A., Letter to Max Born, 4th December 1926. English translation in: The Born-Einstein Letters 1916-1955 (MacMillan Press, Basingstoke, UK), 2005, p.88.

x Einstein, A., Letter to Max Born, 3rd March 1947. Ibid., p.155.

xi Wheeler, J.A., 1978, “The ‘past’ and the ‘delayed-choice’ double-slit experiment”, in Mathematical Foundations of Quantum Theory, ed. A.R. Marlow (Academic Press, New York, USA), p.9-48. Experimental verification: Jacques, V., Wu, E., Grosshans, F., Treusshart, F., Grangier, P., Aspect, A. & Roch, J.-F., “Experimental Realization of Wheeler’s Delayed-Choice Gedanken Experiment”, Science 315, 966-968 (2007).

xii Weinberg, S., 2005, The Quantum Theory of Fields, vols. 1-3 (Cambridge University Press, UK).

xiii Hanneke, D., Fogwell, S. & Gabrielse, G., “New measurement of the electron magnetic moment and the fine-structure constant”, Physical Review Letters 100, 120801 (2008); 4pp.

xiv Dirac, P.A.M., 1958, The Principles of Quantum Mechanics (4th ed., Oxford University Press, UK).

xv Mermin, N.D., “Simple unified form for the major no-hidden-variables theorems”, Physical Review Letters 65, 3373-3376 (1990); Mermin, N.D., “Hidden variables and the two theorems of John Bell”, Reviews of Modern Physics 65, 803-815 (1993). This is a simpler version of the ‘Kochen-Specker’ analysis: Kochen, S. & Specker, E.P., “The problem of hidden variables in quantum mechanics”, Journal of Mathematics and Mechanics 17, 59-87 (1967).

xvi Elitzur, A.C. & Vaidman, L., “Quantum mechanical interaction-free measurements”, Foundations of Physics 23, 987-997 (1993).

xvii Mermin, N.D., 2007, Quantum Computer Science (Cambridge University Press, UK).

xviii DeWitt, B.S. & Graham, N. (eds.), 1973, The Many-Worlds Interpretation of Quantum Mechanics (Princeton University Press, New Jersey, USA). The idea is due to Hugh Everett III, whose work is reproduced in this book.

xix This immediately resolves the well known Schrödinger’s cat paradox.

xx Van Kampen, N.G., “Ten theorems about quantum mechanical measurements”, Physica A153, 97-113 (1988).

xxi Zurek, W.H., “Decoherence and the transition from quantum to classical”, Physics Today 44, 36-44 (1991).

xxii Lasenby, A., Doran, C. & Gull, S., “Gravity, gauge theories and geometric algebra”, Philosophical Transactions of the Royal Society A356, 487-582 (1998). This paper derives and studies gravity as a gauge theory using the mathematical language of Clifford algebra, which is the extension of complex analysis to higher dimensions than 2. Just as complex analysis is more efficient than vector analysis in 2 dimensions, Clifford algebra is superior to conventional vector/tensor analysis in higher dimensions. (Quaternions are the 3-dimensional version, a generalisation that Nino would doubtless appreciate.) Nobody uses the Roman numeral system any more for long division! This theory of gravity involves two fields that obey first-order differential equations with respect to space and time, in contrast to general relativity in which the metric tensor obeys second-order equations. These gauge fields derive from translational and rotational invariance and can be expressed with reference to a flat background spacetime (i.e., whenever coordinates are needed they can be Cartesian or some convenient transformation thereof). Presumably it is these two gauge fields, rather than the metric tensor, that should satisfy quantum (non-)commutation relations in a quantum theory of gravity.

Half Term Blue Moon

Posted in Biographical, Music, The Universe and Stuff with tags Blue Moon, The Marcels on July 31, 2015 by telescoper

Tonight’s a Blue Moon, which happens whenever there are two full moons in a calendar month, although the phrase used to mean the third full moon in a season that contains four. A Blue Moon isn’t actually all that rare an occurrence. In fact there’s one every two or three years on average. But it does at least provide an excuse to post this again…

Incidentally, today marks the half-way point in my five-year term as Head of the School of Mathematical and Physical Sciences at the University of Sussex. I started on 1st February 2013, so it’s now been exactly two years and six months. It’s all downhill from here!

Falsifiability versus Testability in Cosmology

Posted in Bad Statistics, The Universe and Stuff with tags Bayesian Inference, Bayesian probability, Falsifiability, Gubitosi et al., Karl Popper, Testability on July 24, 2015 by telescoper

A paper came out a few weeks ago on the arXiv that’s ruffled a few feathers here and there so I thought I would make a few inflammatory comments about it on this blog. The article concerned, by Gubitosi et al., has the abstract:

I have to be a little careful as one of the authors is a good friend of mine. Also there’s already been a critique of some of the claims in this paper here. For the record, I agree with the critique and disagree with the original paper: the claim below cannot be justified.

…we illustrate how unfalsifiable models and paradigms are always favoured by the Bayes factor.

If I get a bit of time I’ll write a more technical post explaining why I think that. However, for the purposes of this post I want to take issue with a more fundamental problem I have with the philosophy of this paper, namely the way it adopts “falsifiability” as a required characteristic for a theory to be scientific. The adoption of this criterion can be traced back to the influence of Karl Popper and particularly his insistence that science is deductive rather than inductive. Part of Popper’s claim is just a semantic confusion. It is necessary at some point to deduce what the measurable consequences of a theory might be before one does any experiments, but that doesn’t mean the whole process of science is deductive. As a non-deductivist I’ll frame my argument in the language of Bayesian (inductive) inference.

Popper rejects the basic application of inductive reasoning in updating probabilities in the light of measured data; he asserts that no theory ever becomes more probable when evidence is found in its favour. Every scientific theory begins infinitely improbable, and is doomed to remain so. There is a grain of truth in this, or can be if the space of possibilities is infinite. Standard methods for assigning priors often spread the unit total probability over an infinite space, leading to a prior probability which is formally zero. This is the problem of improper priors. But this is not a killer blow to Bayesianism. Even if the prior is not strictly normalizable, the posterior probability can be. In any case, given sufficient relevant data the cycle of experiment-measurement-update of probability assignment usually soon leaves the prior far behind. Data usually count in the end.
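To illustrate the point that data usually leave the prior far behind, here is a minimal sketch using a textbook Beta-Binomial update. The coin-style experiment, the two priors and the data counts are all hypothetical choices made for illustration; none of them comes from the paper under discussion.

```python
def beta_posterior_mean(prior_a, prior_b, successes, failures):
    """Posterior mean of a success probability under a Beta(prior_a, prior_b) prior."""
    return (prior_a + successes) / (prior_a + prior_b + successes + failures)


# Two hypothetical observers who start out disagreeing strongly.
flat_prior = (1.0, 1.0)          # prior mean 0.5
opinionated_prior = (20.0, 2.0)  # prior mean about 0.91

# The same (made-up) data are shown to both observers in growing amounts.
for successes, failures in [(0, 0), (7, 3), (70, 30), (700, 300)]:
    m1 = beta_posterior_mean(*flat_prior, successes, failures)
    m2 = beta_posterior_mean(*opinionated_prior, successes, failures)
    n = successes + failures
    print(f"n={n:4d}  flat: {m1:.3f}  opinionated: {m2:.3f}  gap: {abs(m1 - m2):.3f}")
```

With no data the two estimates differ by about 0.4; after a thousand trials they differ by less than 0.01, even though neither observer ever changed their prior.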

I believe that deductivism fails to describe how science actually works in practice and is actually a dangerous road to start out on. It is indeed a very short ride, philosophically speaking, from deductivism (as espoused by, e.g., David Hume) to irrationalism (as espoused by, e.g., Paul Feyerabend).

The idea by which Popper is best known is the dogma of falsification. According to this doctrine, a hypothesis is only said to be scientific if it is capable of being proved false. In real science certain “falsehood” and certain “truth” are almost never achieved. The claimed detection of primordial B-mode polarization in the cosmic microwave background by BICEP2 was hailed by some as “proof” of cosmic inflation, which it wouldn’t have been even if it hadn’t subsequently been shown not to be a cosmological signal at all. What we now know to be the failure of BICEP2 to detect primordial B-mode polarization doesn’t disprove inflation either.

Theories are simply more probable or less probable than the alternatives available on the market at a given time. The idea that experimental scientists struggle through their entire life simply to prove theorists wrong is a very strange one, although I definitely know some experimentalists who chase theories like lions chase gazelles. The disparaging implication that scientists live only to prove themselves wrong comes from concentrating exclusively on the possibility that a theory might be found to be less probable than a challenger. In fact, evidence neither confirms nor discounts a theory; it either makes the theory more probable (supports it) or makes it less probable (undermines it). For a theory to be scientific it must be capable of having its probability influenced in this way, i.e. amenable to being altered by incoming data (i.e. evidence). The right criterion for a scientific theory is therefore not falsifiability but testability. It follows straightforwardly from Bayes’ theorem that a testable theory will not predict all things with equal facility. Scientific theories generally do have untestable components. Any theory has its interpretation, which is the untestable penumbra that we need to supply to make it comprehensible to us. But whatever can be tested can be regarded as scientific.
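The link between testability and Bayes’ theorem can be sketched in a few lines. This is only a toy, assuming a theory with a single rival and made-up likelihood values; the function name and all the numbers are illustrative.

```python
def posterior(prior, likelihood_theory, likelihood_rival):
    """Bayes' theorem for a theory competing against a single rival hypothesis."""
    evidence = prior * likelihood_theory + (1.0 - prior) * likelihood_rival
    return prior * likelihood_theory / evidence


prior = 0.3  # hypothetical prior probability of the theory

# Data the theory predicted more strongly than its rival: probability rises.
print(posterior(prior, 0.8, 0.2))

# Data the theory made unlikely: probability falls.
print(posterior(prior, 0.1, 0.6))

# The theory accommodates these data exactly as easily as its rival does,
# so the data cannot discriminate and the probability stays at the prior.
print(posterior(prior, 0.4, 0.4))
```

If the third case held for every conceivable dataset, no measurement could ever move the theory’s probability, which is what it means to be untestable in the sense used above.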

So I think the Gubitosi et al. paper starts on the wrong foot by focussing exclusively on “falsifiability”. The issue of whether a theory is testable is complicated in the context of inflation because prior probabilities for most observables are difficult to determine with any confidence because we know next to nothing about either (a) the conditions prevailing in the early Universe prior to the onset of inflation or (b) how properly to define a measure on the space of inflationary models. Even restricting consideration to the simplest models with a single scalar field, initial data are required for the scalar field (and its time derivative) and there is also a potential whose functional form is not known. It is therefore a far from trivial task to assign meaningful prior probabilities on inflationary models and thus extremely difficult to determine the relative probabilities of observables and how these probabilities may or may not be influenced by interactions with data. Moreover, the Bayesian approach involves comparing probabilities of competing theories, so we also have the issue of what to compare inflation with…

The question of whether cosmic inflation (whether in general concept or in the form of a specific model) is testable or not seems to me to boil down to whether it predicts all possible values of relevant observables with equal ease. A theory might be testable in principle, but not testable at a given time if the available technology at that time is not able to make measurements that can distinguish between that theory and another. Most theories have to wait some time before experiments can be designed and built to test them. On the other hand a theory might be untestable even in principle, if it is constructed in such a way that its probability can’t be changed at all by any amount of experimental data. As long as a theory is testable in principle, however, it has the right to be called scientific. If the current available evidence can’t test it we need to do better experiments. In other words, there’s a problem with the evidence, not the theory.

Gubitosi et al. are correct in identifying the important distinction between the inflationary paradigm, which encompasses a large set of specific models each formulated in a different way, and an individual member of that set. I also agree – in contrast to many of my colleagues – that it is actually difficult to argue that the inflationary paradigm is currently testable. But that doesn’t necessarily mean that it isn’t scientific. A theory doesn’t have to have been tested in order to be testable.

Exciting Opportunity in Experimental Physics at the University of Sussex!

Posted in Education, The Universe and Stuff with tags Biophysics, Condensed Matter, Experimental Physics, Materials Science, University of Sussex on July 23, 2015 by telescoper

Just a quick update on the news that the Department of Physics & Astronomy at the University of Sussex has an exciting opportunity in the form of a brand new Chair position in Experimental Physics. The advertisement appeared on the University of Sussex website some days ago. But it has now appeared on Nature Jobs and the Times Higher websites. It is also in today’s print edition of the Times Higher. At least I think it is. I couldn’t find a copy in W.H. Smith’s when I went there today. Obviously it has sold out because word has got out about this job!

I’m taking the liberty of reposting a description of the new position here, but for fuller details please visit the formal advertisement.

–0–

The School of Mathematical and Physical Sciences seeks to appoint a Professor in Experimental Physics in the Department of Physics & Astronomy to lead the next phase of expansion and diversification of the research portfolio within the School by establishing an entirely new research activity in laboratory-based physics.

Sufficient resources will be made available to the selected candidate to establish a new group at Sussex in their field of experimental physics including, for example, condensed matter (interpreted widely), materials science, nanophysics or biophysics. Applicants in research areas with scope for interdisciplinary collaborations with other Schools at the University of Sussex (e.g. Life Sciences, Engineering & Informatics or Brighton and Sussex Medical School) are encouraged, especially those in areas with potential for generating research impact, as defined in the context of the UK Research Excellence Framework.

The successful applicant will have a proven track-record of success in obtaining substantial external funding through research grants and/or industrial sponsorship.

The appointee will be supported with a substantial (seven-figure) sum for start-up funding and an extensive newly-refurbished laboratory space. The financial package on offer will also support the appointment of at least two further experimental lectureships; the appointed professor is expected to be strongly involved in recruitment to these positions.

Informal (and confidential) enquiries may be addressed in the first instance to the Head of School, Professor Peter Coles (P.Coles@sussex.ac.uk).

The Curious Case of the 3.5 keV “Line” in Cluster Spectra

Posted in Bad Statistics, The Universe and Stuff with tags dark matter, galaxy clusters, Katie Mack, neutrino, Particle Physics, Sterile neutrino, XMM/Newton on July 22, 2015 by telescoper

Earlier this week I went to a seminar. That’s a rare enough event these days given all the other things I have to do. The talk concerned was by Katie Mack, who was visiting the Astronomy Centre and it contained a nice review of the general situation regarding the constraints on astrophysical dark matter from direct and indirect detection experiments. I’m not an expert on experiments – I’m banned from most laboratories on safety grounds – so it was nice to get a review from someone who knows what they’re talking about.

One of the pieces of evidence discussed in the talk was something I’ve never really looked at in detail myself, namely the claimed evidence of an emission “line” in the spectrum of X-rays emitted by the hot gas in galaxy clusters. I put the word “line” in inverted commas for reasons which will soon become obvious. The primary reference for the claim is a paper by Bulbul et al which is, of course, freely available on the arXiv.

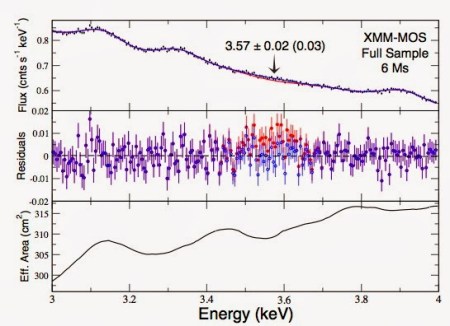

The key graph from that paper is this:

The claimed feature – it stretches the imagination considerably to call it a “line” – is shown in red. No, I’m not particularly impressed either, but this is what passes for high-quality data in X-ray astronomy!

There’s a nice review of this from about a year ago here which says this feature

is very significant, at 4-5 astrophysical sigma.

I’m not sure how to convert astrophysical sigma into actual sigma, but then I don’t really like sigma anyway. A proper Bayesian model comparison is really needed here. If it is a real feature then a plausible explanation is that it is produced by the decay of some sort of dark matter particle in a manner that involves the radiation of an energetic photon. An example is the decay of a massive sterile neutrino – a hypothetical particle that does not participate in weak interactions – into a lighter standard model neutrino and a photon, as discussed here. In this scenario the parent particle would have a mass of about 7 keV so that the resulting photon has an energy of half that. Such a particle would constitute warm dark matter.
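The “half the mass” figure follows from energy and momentum conservation in a two-body decay. As a minimal sketch, assuming the parent decays at rest, working in units with c = 1, and treating the daughter neutrino mass as negligible (the function name is just an illustrative label):

```python
def photon_energy_kev(parent_mass_kev, daughter_mass_kev=0.0):
    """Photon energy in the parent rest frame for parent -> daughter + photon.

    Energy-momentum conservation gives E = (M^2 - m^2) / (2 M) in units with c = 1,
    where M is the parent mass and m the daughter mass.
    """
    return (parent_mass_kev**2 - daughter_mass_kev**2) / (2.0 * parent_mass_kev)


# A 7 keV parent and an effectively massless daughter neutrino.
print(photon_energy_kev(7.0))  # 3.5
```

Any realistic mass for the daughter neutrino (well below 1 keV) changes the answer by a negligible amount, which is why the photon energy is quoted simply as half the parent mass.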

On the other hand, that all depends on you being convinced that there is anything there at all other than a combination of noise and systematics. I urge you to read the paper and decide. Then perhaps you can try to persuade me, because I’m not at all sure. The X-ray spectrum of hot gas does have a number of known emission features in it that need to be subtracted before any anomalous emission can be isolated. I will remark however that there is a known recombination line of Argon that lies at 3.6 keV, and you have to be convinced that this has been subtracted correctly if the red bump is to be interpreted as something extra. Also note that all the spectra that show this feature are obtained using the same instrument, on the XMM/Newton spacecraft, which makes it harder to eliminate the possibility that it is an instrumental artefact.

I’d be interested in comments from X-ray folk about how confident we should be that the 3.5 keV “anomaly” is real…

Software Use in Astronomy

Posted in Education, The Universe and Stuff with tags astronomy, Fortran, programming, Python, Software on July 21, 2015 by telescoper

I just saw an interesting paper which hit the arXiv last week and thought I would share it here. It’s called Software Use in Astronomy: An Informal Survey and the abstract is here:

A couple of things are worth remarking upon. One concerns Python. Although I’m not surprised that Python is Top of the Pops amongst astronomers – like many Physics & Astronomy departments we actually teach it to undergraduates here at the University of Sussex – it is notable that its popularity is a relatively recent phenomenon and it’s quite impressive how rapidly it has caught on.

Another interesting thing is the continuing quite heavy use of Fortran. Most computer scientists would consider this to be an obsolete language, and it is presumably mainly used because of inertia: some important and well established codes are written in it and presumably it’s too much effort to rewrite them from scratch in something more modern. I would have thought that Fortran would have been used primarily by older academics, i.e. old dogs who can’t learn new programming tricks. However, that doesn’t really seem to be the case based on the last sentence of the abstract.

Finally, it’s quite surprising that over 40% of astronomers claim to have had no training in software development. We do try to embed that particular skill in graduate programmes nowadays, but it seems that doesn’t always work!

Anyway, do read the paper yourself. It’s very interesting. Any further comments through the box below please, but please ensure they compile before submitting them…

Astronomy: One of the Seven Liberal Arts

Posted in Art, Education, History, The Universe and Stuff with tags Giovanni dal Ponte, Quadrivium, Seven Liberal Arts, Trivium on July 20, 2015 by telescoper

This morning I came across this picture (via @hist_astro on Twitter):

It is by Giovanni dal Ponte and was painted in or around 1435; the original is in the Museo Nacional del Prado in Madrid. It depicts the Seven Liberal Arts which, in antiquity, were considered the essential elements of the education system. The Arts concerned are: Grammar, Rhetoric, Dialectic, Astronomy, Arithmetic, Geometry and Music. Appropriately enough, Astronomy is in the middle.

I suspect some of you may have noticed that there are more than seven figures in the painting. That’s because each of the Liberal Arts is itself represented by a (female) figure, presumably a Goddess, and also a famous character associated with the particular discipline. Second from the right, for example, you can see Arithmetic accompanied by Pythagoras, who seems to be trying to copy from her notebook. In the centre, kneeling at the feet of Urania (the muse of Astronomy), is Ptolemy.

It’s quite interesting to look at the structure of a Liberal Arts education as it would be in classical antiquity. The first three subjects (Grammar, Rhetoric and Dialectic) formed the Trivium (from which we get the English word “trivial”). “Grammar” means the science of the correct usage of language, knowledge and understanding of which helps a person to speak and write correctly; “Dialectic” basically means “logic”, the science of rational thinking as a means of arriving at the truth; and “Rhetoric” the science of expression, especially persuasion, which includes ways of organizing and presenting an argument so that people will understand and hopefully believe it. These may have been considered trivial in ancient times, but I can’t help thinking that we could do with a lot more emphasis on such fundamental skills in the modern curriculum.

After the Trivium came the Quadrivium: Arithmetic, Geometry, Astronomy and Music, all of which were considered to be disciplines connected with Mathematics. Presumably these are the non-trivial subjects. We might nowadays consider Astronomy to be a mathematical subject – indeed in the United Kingdom astronomy was until relatively recently generally taught in mathematics departments, even after the rise of astrophysics in the 19th Century. On the other hand, fewer nowadays would recognize music as being essentially mathematical in nature. Historically, however, the connections between music, mathematics and natural philosophy were many and profound.

Of course there are now many other disciplines and it would be impossible for any education to encompass all fields of study, but I do think that it’s a shame that modern education systems are so lacking in breadth, as they tend to emphasize the differences between subjects rather than what they all have in common.

Honoris Causa: John Francis, Inventor of the QR Algorithm

Posted in The Universe and Stuff with tags eigenvalues, eigenvectors, John Francis, John G.F. Francis, mathematics, QR algorithm, Sanjeev Bhaskar on July 18, 2015 by telescoper

It’s been yet another busy week, trying to catch up on things I missed last week as well as preparing for Thursday’s graduation ceremony for students from the School of Mathematical and Physical Sciences. At this year’s ceremony, as well as reading out the names of graduands from the School of which I am Head, I also had the pleasant duty of presenting mathematician John G.F. Francis for an Honorary Doctorate of Science.

The story of John Francis is a remarkable one, as I hope you will agree if you read the following brief account which is adapted from the oration I delivered at the ceremony. It was a special pleasure to be asked to present this award because you could never wish to meet a more modest or self-effacing individual. Indeed, when I asked him at the lunch following the ceremony what he thought of the work for which he had been awarded a degree honoris causa, he shrugged it off and said that he thought it was an obvious thing to do and anyone else could have done it had they thought of it. Maybe that’s true in hindsight, but the point is that “they” didn’t and “he” did. The fact that it has taken over fifty years for him to be recognized for something so important is regrettable to say the least, but I am glad to have been there to see him justifiably honoured. Great thanks are due to Drs Omar Lakkis and Anotida Madzvamuse of the Department of Mathematics at the University of Sussex for bringing his case to the attention of the University as eminently suitable for such an honour. So impressed were the graduating students that a number shook his hand as they passed him on the stage during their own part of the ceremony. I’ve never seen that happen before!

John Francis is a pioneer in the field of mathematical computation where his name is more-or-less synonymous with the so-called “QR algorithm”, an ingenious factorization procedure used to calculate the eigenvalues and eigenvectors of linear operators (represented as matrices).

Before I go on it’s probably worth explaining that the letters ‘QR’ don’t stand for any words in particular. The algorithm involves decomposing the matrix whose eigenvalues are required into the product of an orthogonal matrix (which Francis happened to call Q) and an upper-triangular matrix (which Francis happened to call R). In fact in his original manuscript, the orthogonal matrix was called O but it was subsequently changed to avoid confusion with ‘0’ (zero). At any rate, it certainly has nothing to do with research funding!
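For the curious, the idea can be sketched in a few lines of plain Python. This is the basic textbook iteration (factor the matrix as QR, multiply back as RQ, repeat), assuming a small real symmetric matrix whose eigenvalues have distinct magnitudes. It is not Francis’s published method, which adds refinements such as shifts to speed up convergence, and the function names are purely illustrative.

```python
import math


def qr_decompose(a):
    """Factor a square matrix (list of rows) as Q R using modified Gram-Schmidt."""
    n = len(a)
    columns = [[a[i][j] for i in range(n)] for j in range(n)]
    q_columns = []
    r = [[0.0] * n for _ in range(n)]
    for j in range(n):
        v = columns[j][:]
        # Remove the components of v along the orthonormal columns found so far.
        for k, q in enumerate(q_columns):
            r[k][j] = sum(qi * vi for qi, vi in zip(q, v))
            v = [vi - r[k][j] * qi for qi, vi in zip(q, v)]
        r[j][j] = math.sqrt(sum(vi * vi for vi in v))
        q_columns.append([vi / r[j][j] for vi in v])
    q = [[q_columns[j][i] for j in range(n)] for i in range(n)]
    return q, r


def matmul(x, y):
    """Product of two square matrices stored as lists of rows."""
    n = len(x)
    return [[sum(x[i][k] * y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def qr_eigenvalues(a, iterations=200):
    """Unshifted QR iteration: A -> R Q has the same eigenvalues as A = Q R."""
    for _ in range(iterations):
        q, r = qr_decompose(a)
        a = matmul(r, q)
    # For the matrices assumed here the iterates approach a diagonal matrix.
    return sorted(a[i][i] for i in range(len(a)))


print(qr_eigenvalues([[2.0, 1.0], [1.0, 2.0]]))  # eigenvalues close to 1 and 3
```

Each step replaces A = QR by RQ, which equals Q-transpose times A times Q, so the eigenvalues never change while the off-diagonal entries shrink; the diagonal is then read off at the end.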

The mathematics and physics graduates in the audience were probably well aware of the importance of eigenvalue problems, which crop up in a huge variety of contexts in these and other scientific disciplines, from geometry to graph theory to quantum mechanics to geology to molecular structure to statistics to engineering; the list is almost endless. Indeed there can be few people working in such fields who haven’t at one time or another turned to the QR algorithm in the course of their calculations. I know I have, in my own field of astrophysics! It has become a standard component of any theoretician’s mathematical toolkit because of its numerical stability.

The algorithm was first derived by John Francis in two papers published in 1959 and, independently a couple of years later, by the Russian mathematician Vera Kublanovskaya (who passed away in 2012). You can find both the papers online: here and here. Interestingly, the problem that John Francis was trying to solve when he devised the QR algorithm concerned the “flutter” or vibrations of aircraft wings.

But it is in the world of the World Wide Web that the QR algorithm has had perhaps its greatest impact. Many of us who were using the internet in 1998 were astonished when Google arrived on the scene because it was so much faster and more effective than all the other search engines available at the time. The secret of this success was the PageRank algorithm (named after Larry Page, one of the founders of Google) which involved applying the QR decomposition to calculate numerical factors expressing the relative “importance” of elements within a linked set (such as pages on the World Wide Web) measured by the nature of their links to other elements. The QR algorithm is not the only technique exploited by Google, but it is safe to say that it is what gave Google its edge.

The achievements of John Francis are indeed impressive, even more so when you read his biography, for he did all this pioneering work in numerical analysis without even having an undergraduate degree in Mathematics.

John Francis actually left school in 1952 and obtained a place at Christ’s College, Cambridge for entry in 1955, after two years of National Service during which he served in Germany and Korea with the Royal Artillery. On leaving the army in 1954 he worked for a time at the National Research Development Corporation which was set up in 1948 by the Attlee government in order to facilitate the transfer of new technologies developed during World War 2 into the private sector in an effort to boost British commerce and industry. Among the priority areas covered by the NRDC was computing, and it was there that John Francis cut his teeth in the field of numerical analysis. He went to University as planned but did not complete his degree, instead returning to the NRDC in 1956 after less than a year of study. It was while working there in 1958 and 1959 that he devised the QR algorithm.

He left the NRDC in 1961 to work at Ferranti Ltd after which, in 1967, he moved to Brighton and took up a position at the University of Sussex in the Laboratory of Experimental Psychology, helping to devise a new computer language for running experiments. He left the University in 1972 to work in various private sector computer service companies in Sussex. He has now retired but still lives locally, in Hove.

Having left the field of numerical analysis in the 1960s, John Francis had absolutely no idea of the impact his work on the QR algorithm had had, nor was he aware that it was widely recognized as one of the Top Ten Algorithms of the Twentieth Century, until he was traced and contacted in 2007 by the organizers of a mini-symposium that was being planned to celebrate 50 years of the QR algorithm; he was the opening speaker at that meeting in Glasgow when it took place in 2009.

More recently still, in 2011, after what he describes as “sporadic” study over many years, John Francis was awarded an undergraduate degree from the Open University, 56 years after he started one at Cambridge. I am very glad that there was no similar delay in him proceeding to a Doctorate!

Physics is more than applied mathematics

Posted in Education, The Universe and Stuff with tags Experiment, mathematics, Physics, Theory on July 15, 2015 by telescoper

I thought rather hard before reblogging this, as I do not wish to cause any conflict between the different parts of my School – the Department of Mathematics and the Department of Physics and Astronomy!

I don’t think I really agree that Physics is “more” than Applied Mathematics, or at least I would put it rather differently. Physics and Mathematics intersect, but there are parts of mathematics that are not physical and parts of physics that are not mathematical.

Discuss.

A problem set for potential applicants in the foyer of the Physics department of a premier UK university. It looks like physics, but it is in fact maths. The reason is that in the context of this problem, the string cannot pull a particle along at all unless it stretches slightly. Click the image for a larger diagram.

While accompanying my son on an Open Day in the Physics Department of a premier UK university, I was surprised and appalled to be told that Physics ‘was applied mathematics’.

I would just like to state here for the record that Physics is not applied mathematics.

So what’s the difference exactly?

I think there are two linked, but subtly distinct, differences.

1. Physics is a science and mathematics is not.

This means that physics has an experimental aspect. In physics, it is possible to disprove a hypothesis by experiment: this cannot be done in maths.

2. Physics is about…

View original post 256 more words