Recent changes to the criteria for allocating research funding require particle physicists and astronomers to justify the wider social, cultural and economic impact of their science. In view of the directive to engage in work more directly relevant to the person in the street, I’ve decided to share with you my latest results, which involve the application of ideas from theoretical physics in the wider field of human activity. That is, if you’re one of those people who like to have sex in a field.

In the simplest theories of the sexual interaction, the eigenstates of the Hamiltonian describing all allowed forms of two-body coupling are identified with the conventional gender states, “Male” and “Female”, denoted |M> and |F> in the Dirac bra-ket notation; note that the bra is superfluous in this context so, as usual, we dispense with it at the outset. Interactions between |M> and |F> states are assumed to be attractive, while those between |M> and |M> or |F> and |F> are supposed either to be repulsive or, in some theories, entirely forbidden.

Observational evidence, however, strongly suggests that two-body interactions involving either F-F or M-M coupling, though suppressed in many situations, are by no means ruled out in the manner one would expect from the simplest theory outlined above. Furthermore, experiments indicate that the relevant channel for M-M interactions appears to have a comparable cross-section to that of the standard M-F variety, so a similar form of tunneling is presumably involved. This suggests that a more complete theory could be obtained by a relatively simple modification of the version presented above.

Inspired by the recent Nobel Prize awarded for the theory of quark mixing, we are now able to present a new, unified theory of the sexual interaction. In our theory the “correct” eigenstates for sexual behaviour are not the conventional |M> and |F> gender states but linear combinations of the form

|M>=cosθ|S> + sinθ|G>

|F>=-sinθ|G>+cosθ|S>

where θ is the Cabibbo mixing angle or, more appropriately in this context, the sexual orientation (measured in degrees). Extension to three states is in principle possible (but a bit complicated) and we will not discuss this issue further.

In this theory each |M> or |F> state is regarded as a linear combination of heterosexual (straight, S) and homosexual (gay, G) states represented by a rotation of the basis by an angle θ, exactly the same mechanism that accounts for the charge-changing weak interactions between quarks.

For a purely heterosexual state, this angle is zero, in which case we recover the simple theory outlined above. At θ=90° only the G component manifests itself; in this state only classically forbidden interactions are permitted. The general state, however, is one with a value of the orientation angle somewhere between these two limits, and this permits all forms of interaction, at least with some probability.

Note added in proof: the |G> states do not appear in standard QFT but are motivated by some versions of string theory, especially those involving G-strings.

One immediate consequence of this theory is that a “pure” gender state should generally be regarded as a quantum superposition of “straight” and “gay” states. This differs from a classical theory in that the true state cannot be known with certainty; only the relative frequency of straight and gay behaviour (over a large number of interactions) can be predicted, perhaps explaining the large number of married men to be found on gaydar. The state at any given time is thus entirely determined by a sum over histories up to that moment, taking into account the appropriate action. In the Copenhagen interpretation, collapse one way or the other occurs only when a measurement is made (or when enough Carlsberg is drunk).

If there is a difference in energy between the basis states, a pure |M> state can oscillate between |S> and |G> according to a time-dependent phase factor arising when the two states interfere with each other:

|M(t)>=cosθ|S>exp(-iE1t) + sinθ|G>exp(-iE2t);

(obviously we are using natural units here, so that it all looks cleverer than it actually is). This equation is the origin of the expressions “it’s just a phase he’s going through” and “he swings both ways”. In physics parlance this means that the eigenstates of the sexual interaction do not coincide with the conventional gender types, indicating that sexual behaviour is not necessarily time-invariant for a given body.

Whether single-body phenomena (i.e. self-interactions) can provide insights into this theory depends, as can be seen from the equation, on the energies of the relevant states (as is also the case in neutrino oscillations). If they are equal then there is no oscillation. However, a detailed discussion of the role of degeneracy is beyond the scope of this analysis.
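The role of the energy difference can be checked numerically. What follows is a minimal Python sketch, not part of the original analysis: it assumes |S> and |G> are orthonormal energy eigenstates with energies E1 and E2 (natural units, so ħ = 1), starts from |M> as defined above, and computes the probability that the evolved state |M(t)> is still found in |M>. The function name `survival_probability` is hypothetical.

```python
import cmath
import math


def survival_probability(theta_deg: float, e1: float, e2: float, t: float) -> float:
    """Return |<M(0)|M(t)>|^2 for the two-state mixing model.

    Assumes |S> and |G> are orthonormal energy eigenstates with energies
    e1 and e2 in natural units (hbar = 1), and that the state starts as
    |M(0)> = cos(theta)|S> + sin(theta)|G>.
    """
    theta = math.radians(theta_deg)  # orientation angle is quoted in degrees
    c2 = math.cos(theta) ** 2
    s2 = math.sin(theta) ** 2
    # Each basis component picks up its own phase factor exp(-iEt);
    # projecting back onto |M(0)> gives the overlap amplitude.
    amplitude = c2 * cmath.exp(-1j * e1 * t) + s2 * cmath.exp(-1j * e2 * t)
    return abs(amplitude) ** 2


# Equal energies (degenerate states): the overlap stays at 1 for all t.
print(survival_probability(30.0, 1.0, 1.0, 5.0))
# Maximal mixing after half a period: the overlap vanishes (up to rounding).
print(survival_probability(45.0, 0.0, math.pi, 1.0))
```

Expanding the modulus gives the closed form 1 − sin²(2θ) sin²((E2 − E1)t/2), which shows that an oscillation needs both genuine mixing (θ strictly between 0° and 90°) and non-degenerate energies.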

Self-interactions involving a solitary phase are generally difficult to observe, although examples have been documented that involve short-lived but highly excited states accompanied by various forms of stimulated emission. Unfortunately, the resulting fluxes are not often well measured. This form of interaction also appears to be the current preoccupation of string theorists.

More definitive evidence for the theory might emerge from situations involving some form of entanglement, such as in the examples of M-M and F-F coupling mentioned above. Non-local interactions of a sexual type are possible in principle, but causality and simultaneity issues exist, and most researchers consequently prefer to focus on local interactions, which are generally supposed to be more satisfactory from the point of view of reproducibility.

Although the theory is qualitatively successful, we need more experimental data to pin down the parameters needed for a robust fit. It is not known, for example, whether the rates of M-M and F-F coupling are similar or, indeed, whether the peak intensity of these interactions, when resonance is reached, is similar to that of the standard M-F form. It is generally accepted, however, that the rate of decay from peak intensity is rather slower for processes involving |F> states than for |M>, which is not so easy to model in this theory, although with a bit of renormalization we can probably explain anything.

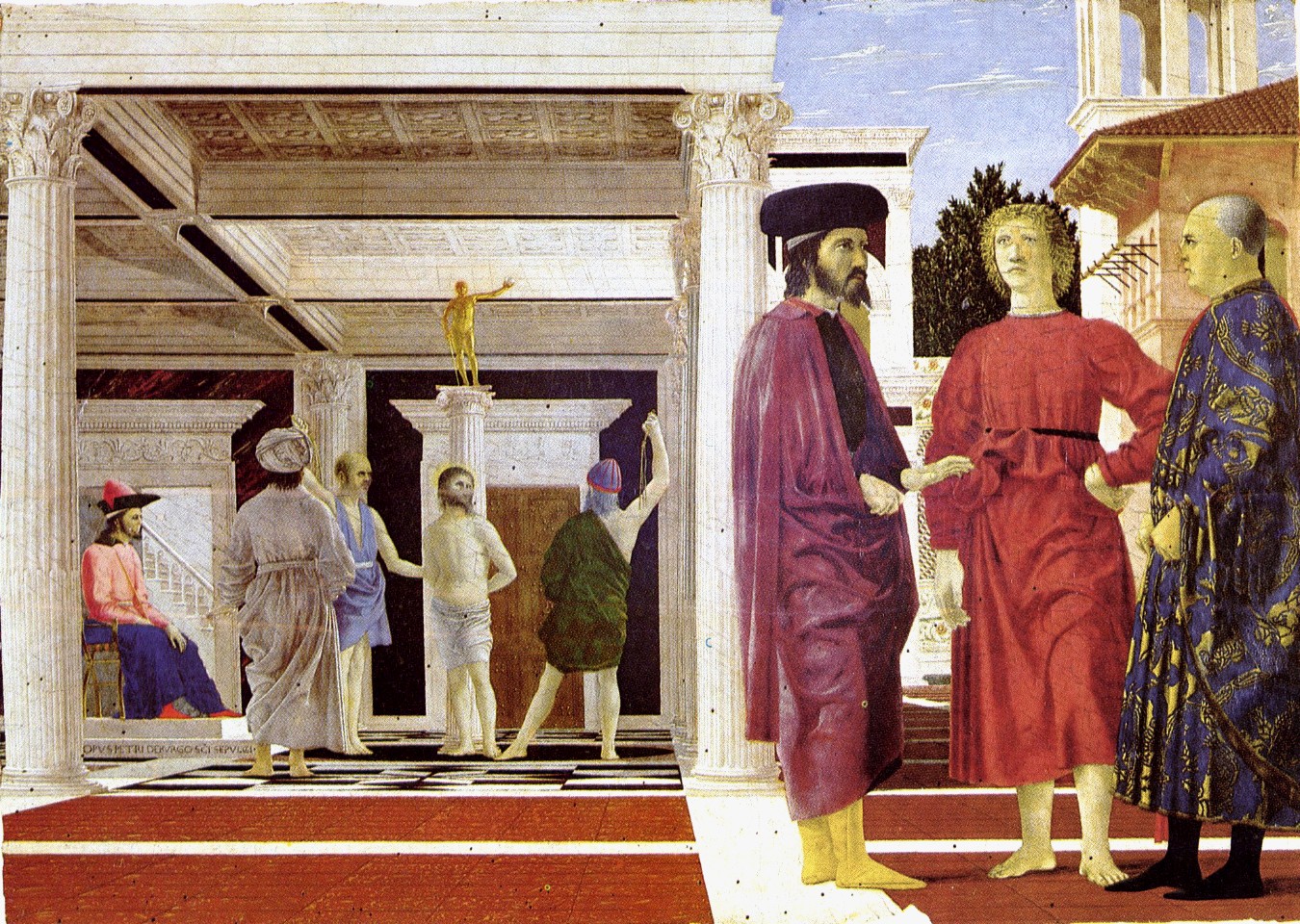

Answers to these questions can perhaps be gleaned from observations of many-body processes (i.e. those with N≥3), especially if they involve a multiplicity of hardon states (i.e. collective excitations). Only these permit a full exploration of all possible degrees of freedom, although higher-order Feynman diagrams are needed to depict them and they require more complicated group theoretical techniques. Examples like the one shown above – representing a threesome – are not well understood, but undoubtedly contribute significantly to the bi-spectrum.

One might also speculate that in these and other highly excited states, the sexual interaction may be described by something more like the electroweak theory in which all forms of interaction occur in a much more symmetric fashion and at much higher rates than at lower energies. That sounds like some kind of party…

It is worth remarking that there may be finer structure than this model takes into account. For example, the |G> state is generally associated with singlet configurations like those shown on the right. However, G-G coupling is traditionally described in terms of “top” |t> and “bottom” |b> states, with b-t coupling the preferred mode, leading to the possibility of doublets or even triplets. It may even prove necessary to introduce a further mixing angle φ of the form

|G>=cosφ |t> + sinφ |b>

so that the general state of |G> is “versatile”. However, whether G-G interactions can be adequately described even in this extended theory is a matter for debate until the intensity of t-t and b-b coupling is more accurately measured.

Finally, we should like to point out the difference between our model and that of the usual quark sextet, in which interacting states are described in terms of three pairs: the bottom (b) and top (t) which we have mentioned already; the strange (s) and charmed (c); and the up (u) and down (d). While it is clear that |b> and |t> do exhibit strong interactions and it appears plausible that |s> and |c> might do likewise, the sexual interaction clearly breaks the isospin symmetry between the |u> and the |d> in both M-M and M-F cases. The “up” state is definitely preferred in all forms of coupling and, indeed, the “down” has only ever been known to engage in weak interactions.

We have recently submitted an application to the Science and Technology Facilities Council for a modest sum (£754 million) to build a large-scale UK facility in order to carry out hands-on experimental tests of some aspects of the theory. We hope we can rely on the support of the physics community in agreeing to close down their labs and quit their jobs in order to release the funding needed to support it.

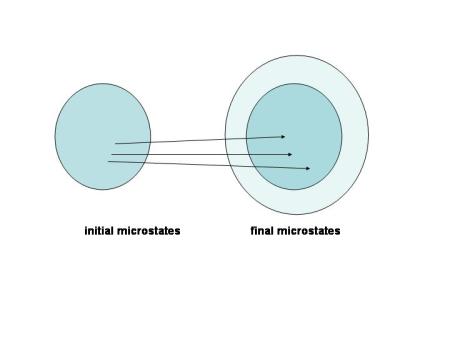

The connection between thermodynamics (which deals with macroscopic quantities) and statistical mechanics (which explains these in terms of microscopic behaviour) is a fascinating but troublesome area.