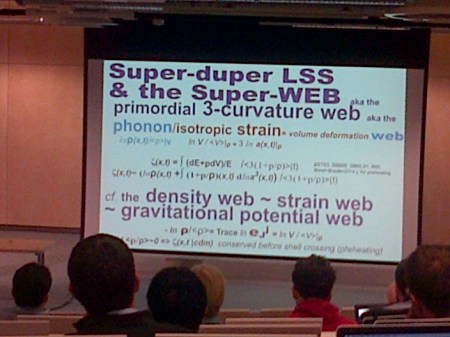

Given the recent discussion in comments on this blog I thought I’d give a brief update on the issue of the scale of cosmic homogeneity; I’m going to repeat some of the things I said in a post earlier this week just to make sure that this discussion is reasonably self-contained.

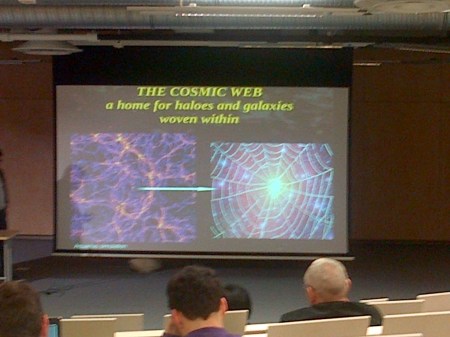

Our standard cosmological model is based on the Cosmological Principle, which asserts that the Universe is, in a broad-brush sense (that is, on large scales), homogeneous (is the same in every place) and isotropic (looks the same in all directions). But the question that has troubled cosmologists for many years is: what is meant by “large scales”? How broad does the broad brush have to be? A couple of presentations discussed the possibly worrying evidence for the presence of a local void, a large underdensity on a scale of about 200 Mpc which may influence our interpretation of cosmological results.

I blogged some time ago about that the idea that the Universe might have structure on all scales, as would be the case if it were described in terms of a fractal set characterized by a fractal dimension . In a fractal set, the mean number of neighbours of a given galaxy within a spherical volume of radius

is proportional to

. If galaxies are distributed uniformly (homogeneously) then

, as the number of neighbours simply depends on the volume of the sphere, i.e. as

, and the average number-density of galaxies. A value of

indicates that the galaxies do not fill space in a homogeneous fashion:

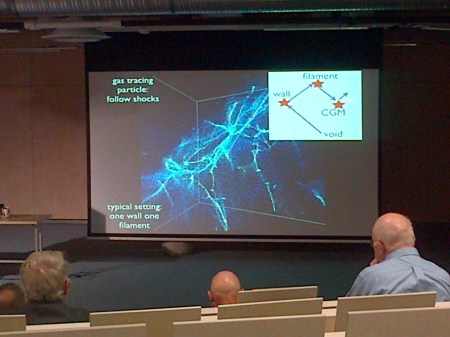

, for example, would indicate that galaxies were distributed in roughly linear structures (filaments); the mass of material distributed along a filament enclosed within a sphere grows linear with the radius of the sphere, i.e. as

, not as its volume; galaxies distributed in sheets would have

, and so on.
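As a rough illustration of how the neighbour-count scaling picks out the dimension, here is a minimal sketch that builds three toy point sets in a unit cube (a filament, a sheet and a uniform volume), counts neighbours in spheres around interior points, and fits the slope of $\ln N(<R)$ against $\ln R$. The function name `estimate_dimension`, the sample sizes and the radii are all assumptions made for this example; it is not the estimator used in any of the survey analyses discussed here.

```python
import numpy as np

def estimate_dimension(points, radii, margin=0.3, max_centres=200):
    """Fit D in N(<R) proportional to R^D from counts in spheres.

    Assumes the points live in the unit cube and that max(radii) <= margin,
    so every sphere lies wholly inside the sample (no edge corrections).
    """
    # Use only centres far enough from the cube faces to avoid edge effects.
    interior = np.all((points > margin) & (points < 1 - margin), axis=1)
    centres = points[interior][:max_centres]
    # Distances from each centre to every point: shape (n_centres, n_points).
    dist = np.linalg.norm(points[None, :, :] - centres[:, None, :], axis=-1)
    # Mean number of neighbours within each radius, excluding the centre itself.
    counts = np.array([(dist < r).sum(axis=1).mean() - 1.0 for r in radii])
    # The slope of log N against log R is the estimated dimension.
    slope, _ = np.polyfit(np.log(radii), np.log(counts), 1)
    return slope

rng = np.random.default_rng(0)
n = 5000
u = rng.random((n, 3))
half = np.full(n, 0.5)

filament = np.column_stack([u[:, 0], half, half])   # points along a line
sheet = np.column_stack([u[:, 0], u[:, 1], half])   # points in a plane
volume = u                                          # points filling the cube

radii = np.geomspace(0.05, 0.25, 8)
for name, pts in [("filament", filament), ("sheet", sheet), ("volume", volume)]:
    print(f"{name}: D ~ {estimate_dimension(pts, radii):.2f}")
```

The fitted slopes should come out close to 1, 2 and 3 respectively, up to sampling noise. Real surveys are much harder, because selection effects and survey boundaries have to be handled carefully.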

We know that the fractal dimension $D$ is well below 3 on small scales (in cosmological terms, still several Megaparsecs), but the evidence for a turnover to $D = 3$ has not been so strong, at least not until recently. It’s not just that measuring $D$ from a survey is actually rather tricky, but also that when we cosmologists adopt the Cosmological Principle we apply it not to the distribution of galaxies in space, but to space itself. We assume that space is homogeneous so that its geometry can be described by the Friedmann-Lemaitre-Robertson-Walker metric.

According to Einstein’s theory of general relativity, clumps in the matter distribution would cause distortions in the metric which are roughly related to fluctuations in the Newtonian gravitational potential $\delta\Phi$ by $\delta\Phi/c^2 \sim (\lambda/ct)^2 (\delta\rho/\rho)$, give or take a factor of a few, so that a large fluctuation in the density of matter wouldn’t necessarily cause a large fluctuation of the metric unless it were on a scale $\lambda$ reasonably large relative to the cosmological horizon $\sim ct$. Galaxies correspond to a large $\delta\rho/\rho$ but don’t violate the Cosmological Principle because they are too small in scale $\lambda$ to perturb the background metric significantly.
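To put rough numbers on this, the sketch below evaluates the order-of-magnitude estimate $\delta\Phi/c^2 \sim (\lambda/ct)^2 (\delta\rho/\rho)$ for two cases. Every input is an illustrative assumption rather than a measurement: a horizon scale of 4000 Mpc, a galaxy-like clump of size 0.01 Mpc with density contrast $10^6$, and a hypothetical 100 Mpc structure with density contrast of order unity. The function name `metric_perturbation` is likewise made up for this example.

```python
def metric_perturbation(scale_mpc, density_contrast, horizon_mpc=4000.0):
    """Order-of-magnitude delta_Phi / c^2 ~ (lambda / ct)^2 * (delta_rho / rho).

    horizon_mpc stands in for ct; 4000 Mpc is an assumed round number.
    """
    return (scale_mpc / horizon_mpc) ** 2 * density_contrast

# Illustrative inputs only (assumptions, not survey values).
galaxy = metric_perturbation(scale_mpc=0.01, density_contrast=1e6)
large_structure = metric_perturbation(scale_mpc=100.0, density_contrast=1.0)

print(f"galaxy-like clump: {galaxy:.1e}")
print(f"100 Mpc structure: {large_structure:.1e}")
```

Under these assumptions both values are far smaller than unity. The galaxy's enormous density contrast is more than cancelled by the factor $(\lambda/ct)^2$, which is the point of the argument above.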

In my previous post I left the story as it stood about 15 years ago, and there have been numerous developments since then, some convincing (to me) and some not. Here I’ll just give a couple of key results, which I think are important because they address a specific quantifiable question rather than relying on qualitative and subjective interpretations.

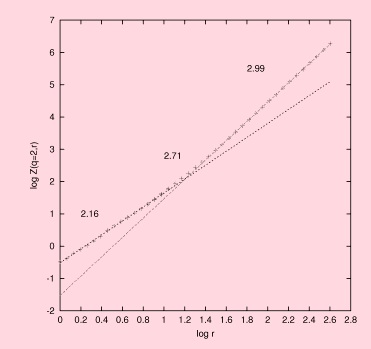

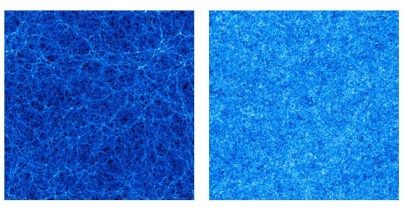

The first, which is from a paper I wrote with my (then) PhD student Jun Pan, gave what I think is the first convincing demonstration that the correlation dimension of galaxies in the IRAS PSCz survey does turn over to the homogeneous value on large scales:

You can see quite clearly that there is a gradual transition to homogeneity beyond about 10 Mpc, and this transition is certainly complete before 100 Mpc. The PSCz survey comprises “only” about 11,000 galaxies, and it is relatively shallow too (with a depth of about 150 Mpc), but it has an enormous advantage in that it covers virtually the whole sky. This is important because it means that the survey geometry does not have a significant effect on the results.

The analysis itself is also important because it does not assume homogeneity at the start. In a traditional correlation function analysis the number of pairs of galaxies with a given separation is compared with a random distribution with the same mean number of galaxies per unit volume. The mean density, however, has to be estimated from the same survey from which the correlation function is being calculated, and if there is large-scale clustering beyond the size of the survey this estimate will not be a fair estimate of the global value. Such analyses therefore assume what they set out to prove. Ours does not beg the question in this way.
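To make the contrast concrete, here is a schematic comparison of the two approaches. The notation ($DD$, $RR$, $\hat{\bar n}$, $A$) is introduced purely for illustration and is not taken from the paper; the first line is the simplest textbook form of a pair-count estimator, and the second is one common way of defining a scale-dependent dimension from counts in spheres.

```latex
% Traditional estimator: data pair counts DD(r) are compared with pair
% counts RR(r) in a random catalogue whose mean density \hat{\bar n} is
% measured from the survey itself.
\hat{\xi}(r) = \frac{DD(r)}{RR(r)} - 1,
\qquad RR(r) \propto \hat{\bar n}^{\,2}

% Counts in spheres: if N(<R) = A R^{D}, the logarithmic slope
D(R) = \frac{\mathrm{d}\ln N(<R)}{\mathrm{d}\ln R}
% is independent of the normalisation A, so it can be measured without
% first estimating the mean density.
```

In the first case any error in $\hat{\bar n}$ feeds directly into $\hat{\xi}$, whereas the logarithmic slope is unaffected by the overall normalisation.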

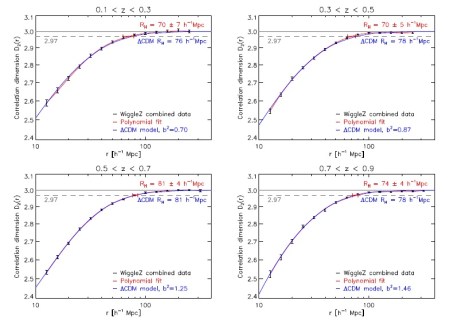

The PSCz survey is relatively sparse, but more recently much bigger surveys involving optically selected galaxies have confirmed this idea with great precision. A particularly important recent result came from the WiggleZ survey (in a paper by Scrimgeour et al. 2012). This survey is big enough to look at the correlation dimension not just locally (as we did with PSCz) but as a function of redshift, so we can see how it evolves. In fact the survey contains about 200,000 galaxies in a volume of about a cubic Gigaparsec. Here are the crucial graphs:

I think this proves beyond any reasonable doubt that there is a transition to homogeneity at about 80 Mpc, well within the survey volume. My conclusion from this and other studies is that the structure is roughly self-similar on small scales, but this scaling gradually dissolves into homogeneity. In a Fractal Universe the correlation dimension would not depend on scale, so what I’m saying is that we do not live in a fractal Universe. End of story.
