Archive for the “The Universe and Stuff” Category

The Day the Earth Didn’t Stand Still..

Posted in The Universe and Stuff with tags Earthquake, GPS, Japan on March 19, 2011 by telescoper

I just came across this amazing visualisation of the recent earthquake in Japan, created using GPS readings from a network called GEONET. The video shows the horizontal (left) and vertical (right) displacements recorded when the earthquake struck. For more information and images, see here.

What Counts as Productivity?

Posted in Bad Statistics, Science Politics, The Universe and Stuff with tags ADS, astronomy, bibliometric, UKIDSS, UKIRT on March 18, 2011 by telescoper

Apparently last year the United Kingdom Infra-Red Telescope (UKIRT) beat its own personal best for scientific productivity. In fact here’s a graphic showing the number of publications resulting from UKIRT to make the point:

The plot also demonstrates that a large part of the recent burst of productivity has been associated with UKIDSS (the UKIRT Infrared Deep Sky Survey), which a number of my colleagues are involved in. Excellent chaps. Great project. Lots of hard work done very well. Take a bow, the UKIDSS team!

Now I hope I’ve made it clear that I don’t in any way want to pour cold water on the achievements of UKIRT, and particularly not UKIDSS, but this does provide an example of how difficult it is to use bibliometric information in a meaningful way.

Take the UKIDSS papers used in the plot above. There are 226 of these listed by Steve Warren at Imperial College. But what is a “UKIDSS paper”? Steve states the criteria he adopted:

A paper is listed as a UKIDSS paper if it is already published in a journal (with one exception) and satisfies one of the following criteria:

1. It is one of the core papers describing the survey (e.g. calibration, archive, data releases). The DR2 paper is included, and is the only paper listed not published in a journal.

2. It includes science results that are derived in whole or in part from UKIDSS data directly accessed from the archive (analysis of data published in another paper does not count).

3. It contains science results from primary follow-up observations in a programme that is identifiable as a UKIDSS programme (e.g. The physical properties of four ~600K T dwarfs, presenting Spitzer spectra of cool brown dwarfs discovered with UKIDSS).

4. It includes a feasibility study of science that could be achieved using UKIDSS data (e.g. The possiblity of detection of ultracool dwarfs with the UKIRT Infrared Deep Sky Survey by Deacon and Hambly).

Papers are identified by a full-text search for the string ‘UKIDSS’, and then compared against the above criteria.

That all seems to me to be quite reasonable, and it’s certainly one way of defining what a UKIDSS paper is. According to that measure, UKIDSS scores 226.

The Warren measure does, however, include a number of papers that don’t directly use UKIDSS data, and many written by people who aren’t members of the UKIDSS consortium. Being picky you might say that such papers aren’t really original UKIDSS papers, but are more like second-generation spin-offs. So how could you count UKIDSS papers differently?

I just tried one alternative way, which is to use ADS to identify all refereed papers with “UKIDSS” in the title, assuming – possibly incorrectly – that all papers written by the UKIDSS consortium would have UKIDSS in the title. The number returned by this search was 38.

Now I’m not saying that this is more reasonable than the Warren measure. It’s just different, that’s all. According to my criterion however UKIDSS measures 38 rather than 226. It sounds less impressive (if only because 38 is a smaller number than 226), but what does it mean about UKIDSS productivity in absolute terms?

Not very much, I think is the answer.

Yet another way you might try to judge UKIDSS using bibliometric means is to look at its citation impact. After all, any fool can churn out dozens of papers that no-one ever reads. I know that for a fact. I am that fool.

But citation data also provide another way of doing what Steve Warren was trying to measure. Presumably the authors of any paper that uses UKIDSS data in any significant way would cite the main UKIDSS survey paper led by Andy Lawrence (Lawrence et al. 2007). According to ADS, the number of times this has been cited since publication is 359. That’s higher than the Warren measure (226), and much higher than the UKIDSS-in-the-title measure (38).

So there we are, three different measures, all in my opinion perfectly reasonable measures of, er, something or other, but each giving a very different numerical value. I am not saying any is misleading or that any is necessarily better than the others. My point is simply that it’s not easy to assign a numerical value to something that’s intrinsically difficult to define.

Unfortunately, it’s a point few people in government seem to be prepared to acknowledge.

Andy Lawrence is 57.

Why’s the Sun not Green?

Posted in The Universe and Stuff with tags Astrophysics, black-body radiation, chlorophyll, green, stellar spectra on March 11, 2011 by telescoper

It’s Friday afternoon and time for a mildly frivolous post.

I’ve recently been teaching first-year astrophysics students (and others) about the radiation emitted by stars, and how stellar spectra can be used to diagnose their physical properties.

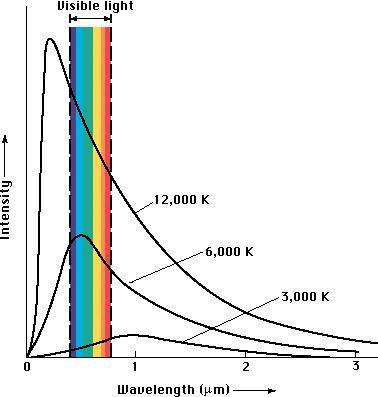

Received wisdom is that the continuous spectrum of light emitted by stars like the Sun is roughly of black-body form, with a peak wavelength inversely proportional to the surface temperature of the star. Here are some examples of black-body curves to illustrate the point.

The Sun has a surface temperature of about 6000 K – actually, more like 5800 K but we won’t quibble. The peak wavelength for the Sun’s spectrum therefore corresponds to bluey-green light, which is why the Sun appears … er… yellow.
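For anyone who wants to check the "bluey-green" claim, here is a minimal sketch using Wien's displacement law, which is the inverse proportionality between peak wavelength and temperature mentioned above. The function name is made up for illustration, and the law is applied to the black-body spectrum per unit wavelength, which is an assumption about which form of the spectrum is being plotted.

```python
# Wien's displacement law: lambda_peak = b / T (spectrum per unit wavelength).
WIEN_B = 2.897771955e-3  # Wien displacement constant in metre-kelvin


def peak_wavelength_nm(temperature_k: float) -> float:
    """Peak wavelength (in nanometres) of a black body at the given temperature."""
    if temperature_k <= 0:
        raise ValueError("temperature must be positive")
    return WIEN_B / temperature_k * 1e9


if __name__ == "__main__":
    # The two surface temperatures quoted in the post.
    for t in (5800.0, 6000.0):
        print(f"T = {t:.0f} K -> peak near {peak_wavelength_nm(t):.0f} nm")
```

Both temperatures give a peak within about 20 nm of 500 nm, which is indeed in the blue-green part of the visible spectrum.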

Anyone care to offer an explanation as to why the Sun isn’t green? Answers on a postcard or, preferably, through the comments box.

And while you’re at it, you might want to comment on why, if the Sun produces so much green light, chlorophyll is actually green?

Sentimental Education

Posted in Education, The Universe and Stuff with tags education, Lecturing, Particle Physics, Physics on March 10, 2011 by telescoper

We’ve now reached the half-way point of the Spring Semester, which means that my teaching load has just doubled; I do the “Particle” bit of a third-year module on “Nuclear and Particle Physics”, which means I have 11 lectures from now until the end of the Semester to tell the students everything I know about particle physics. More than enough time.

Anyway, the first lecture today, as it was last year, was all about Natural Units. I always find it fun doing this, partly because the students stare at me as if I’ve taken leave of my senses. Come to think of it, they do that anyway.

The other night I was having a drink with some colleagues after work. Various topics came up, but we spent a bit of time talking about teaching. It appears that I’m in a small minority of my physics colleagues in that I actually like teaching. In fact, the older I’ve got the more I enjoy it. There’s always a limit, of course, and I wouldn’t like to do so much teaching that I couldn’t do other things, especially research, but I wouldn’t like to be in a job that didn’t involve teaching at all. I think most of my colleagues would jump at the chance to abandon teaching altogether. I can’t understand that attitude, mainly because I find it so rewarding myself, but I’m in a minority of one about so many things nowadays that I’ve ceased worrying about it.

I do sometimes wonder why I find teaching so rewarding. Perhaps it’s because I’m already middle-aged and don’t have any kids of my own. Teaching at least gives me a chance to play some sort of a role in someone else’s development as a person. I can’t guarantee that it’s necessarily a positive role, but there you are. Another thing is that sometimes when I travel about at conferences and whatnot I get to meet people I taught years ago. It means a lot when they say they remember the lectures, especially if they’ve now embarked on scientific careers of their own.

One of the problems of the government’s push for greater concentration of research funds and the simultaneous slashing of teaching budgets is that the quality of University teaching is bound to suffer. If research funding is allocated only to self-styled research “superstars” then Universities will obviously spare them from other duties. Teaching loads for ordinary foot soldiers will increase, with obvious consequences in decreasing enthusiasm among lecturing staff.

It’s already the case that teaching is grossly undervalued, and it’s probably worse in physics departments than anywhere else because, without research funding, most would simply go bust. Teaching funding is nowhere near sufficient to cover the real cost of a physics degree and in any case we can’t deliver advanced physics training without access to the research labs.

On top of this there’s the way teaching is entirely disregarded in promotion cases. On paper, promotion to Professor requires demonstrated commitment to teaching. In reality, all that committees care about is how much research income the candidate brings in. Excellence in teaching counts very little, if anything at all, in the assessment of a promotion case. I think this situation must change, especially with tuition fees set to rise to unprecedented levels, but all the forces currently at play are acting in precisely the wrong direction.

If we concentrate physics research funding any further then we’ll have a small number of rich institutions stuffed full of research professors whom the undergraduates never see. The less successful academics in these departments will be put on teaching-only contracts, not because they like teaching but because their alternative is Her Majesty’s Dole. Meanwhile, less favoured research labs – i.e. those who don’t get lucky in the REF – won’t be able to sustain world-class research or teaching activities and will be forced to shut up shop. Further research concentration is bad news all round for the higher education system.

But I digress.

One of the other things we talked about in the pub was the National Lottery. As regular readers of this blog might know, I put the princely sum of £1 on the lottery every Saturday. Some think this is strange, but I see it partly as one of those little rituals we all invent for ourselves and partly as a small price to pay for a little frisson of excitement when the numbers are drawn.

But I do sometimes wonder what on Earth I would do if I won a multi-million pound jackpot prize. Would I quit my job? Would I quit teaching? Actually, I’m not sure I would do either of those. If I could ditch the admin stuff, I would of course do so. I don’t have a car and have no interest in getting one, especially a fancy one. I don’t need a bigger house, or a yacht. In fact, frankly, there’s nothing that I would really want to buy that I couldn’t buy already. It’s not that I have a huge salary, just that I’m not exactly very materialistic.

So even if I were rich I’d probably carry on doing pretty much what I do now. And that thought brings home just how lucky we are, those of us working in academia. For all the frustrations, the fact remains that we are fortunate to be getting paid for things that we enjoy doing.

Or am I just a sentimental old fool?

Anyway, I feel a poll coming on…

Aurora Borealis

Posted in Poetry, The Universe and Stuff with tags Aurora Borealis, Herman Melville on March 8, 2011 by telescoper

What power disbands the Northern Lights

After their steely play?

The lonely watcher feels an awe

Of Nature’s sway,

As when appearing,

He marked their flashed uprearing

In the cold gloom–

Retreatings and advancings,

(Like dallyings of doom),

Transitions and enhancings,

And bloody ray.

The phantom-host has faded quite,

Splendor and Terror gone

Portent or promise–and gives way

To pale, meek Dawn;

The coming, going,

Alike in wonder showing–

Alike the God,

Decreeing and commanding

The million blades that glowed,

The muster and disbanding–

Midnight and Morn.

by Herman Melville (1819-1891)

A First Problem in Astrophysics

Posted in Education, The Universe and Stuff with tags astronomy, iron, iron sun hypothesis, Physics, Sun on February 25, 2011 by telescoper

When I first arrived at Cambridge University (nearly 30 years ago) to begin my course in Natural Sciences, eventually leading to a specialism in Physics, one of the books we were all asked to buy was the Cavendish Problems in Physics. One of the first problems I had to solve for tutorial work was from that collection, and I have been setting it (in a slightly amended form) for my own students ever since I started lecturing. I thought I’d put it up here because I think there might be a few budding theoretical astrophysicists who’ll find it interesting and because it provides a simple refutation of a crazy theory that has been doing the rounds on Twitter all morning.

I like this problem because it involves a little bit of lateral thinking, because not all the information given seems immediately relevant to the question being asked, but you can get a long way by just writing down the pieces of information given and thinking about how you might use simple physical ideas to connect them to the answer.

If you haven’t seen this problem before, why not have a go?

Using only the information given in this Question, estimate the ratio of the mean densities of the Earth and Sun:

i) the angular diameter of the Sun as seen from Earth is half a degree

ii) the length of 1° of latitude on the Earth’s surface is 100km

iii) the length of a year is $3\times 10^{7}$ seconds

iv) the acceleration due to gravity at the Earth’s surface is $10\ \mathrm{m\,s^{-2}}$.

HINT: You do not need to look up anything else, not even G!

The answer you should get is that the mean density of the Earth is something like 3.5 times that of the Sun, although the information given in the question isn’t all that accurate.
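One route to that number, sketched here under stated assumptions and not necessarily the same as the posted Solution: take the Earth's orbit to be circular and the Earth's mass to be negligible next to the Sun's. Surface gravity gives the Earth's density as $3g/(4\pi G R_E)$, Kepler's third law gives the Sun's as $(3\pi/GT^2)(a/R_S)^3$, and $G$ cancels in the ratio, leaving $gT^2 / \left[4\pi^2 R_E (a/R_S)^3\right]$. The function and argument names below are invented for illustration.

```python
import math


def density_ratio(sun_angular_diameter_deg: float,
                  metres_per_degree_latitude: float,
                  year_seconds: float,
                  surface_gravity: float) -> float:
    """Mean density of the Earth divided by that of the Sun.

    Assumes a circular orbit and neglects the Earth's mass compared
    with the Sun's, so that G*M_sun = 4*pi^2*a^3/T^2.
    """
    # (ii) 360 degrees of latitude make up the full circumference 2*pi*R_E.
    earth_radius = 360.0 * metres_per_degree_latitude / (2.0 * math.pi)
    # (i) the angular diameter is 2*R_S/a in radians, so a/R_S = 2/theta.
    orbit_over_sun_radius = 2.0 / math.radians(sun_angular_diameter_deg)
    # Ratio of 3g/(4*pi*G*R_E) to (3*pi/(G*T^2))*(a/R_S)^3; G cancels.
    return (surface_gravity * year_seconds ** 2) / (
        4.0 * math.pi ** 2 * earth_radius * orbit_over_sun_radius ** 3
    )


if __name__ == "__main__":
    # The four numbers given in the question.
    print(round(density_ratio(0.5, 100e3, 3e7, 10.0), 2))
```

With the rounded inputs from the question this comes out a little above 3, in line with the "something like 3.5" quoted above given how rough the inputs are.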

In fact the mean density of the Earth is about 5500 kg per cubic metre, and that of the Sun is about 1400 kg per cubic metre; the average density of the Sun is just 40% higher than water, which is perhaps surprising to the uninitiated….

The density of solid iron on the other hand is about 7900 kg per cubic metre, and even higher than that if it is compressed…

UPDATE: I’ve added my Solution.


Which side (of the Einstein equations) are you on?

Posted in The Universe and Stuff with tags cosmological constant, Cosmology, Dark Energy, Einstein equations, energy-momentum tensor, General Relativity, vacuum energy on February 22, 2011 by telescoper

As a cosmologist, I am often asked why it is that people talk about the cosmological constant as if it were some sort of vacuum energy or “dark energy”. I was explaining it again to a student today so I thought I’d jot something down here so I can use it for future reference. In a nutshell, it goes like this. The original form of Einstein’s equations for general relativity can be written

$$R_{ij} - \frac{1}{2} g_{ij} R = \frac{8\pi G}{c^4} T_{ij}.$$

The precise meaning of the terms on the left hand side doesn’t really matter, but basically they describe the curvature of space-time and are derived from the Ricci tensor $R_{ij}$ and the metric tensor $g_{ij}$; this is how Einstein’s theory expresses the effect of gravity warping space. On the right hand side we have the energy-momentum tensor (sometimes called the stress tensor) $T_{ij}$, which describes the distribution of matter and its motion. Einstein’s equations can be summarised in John Archibald Wheeler’s pithy phrase: “Space tells matter how to move; matter tells space how to curve”.

In standard cosmology we usually assume that we can describe the matter-energy content of the Universe as a uniform perfect fluid, for which the energy-momentum tensor takes the simple form

$$T_{ij} = (p + \rho c^2) U_i U_j - p g_{ij},$$

in which $p$ is the pressure and $\rho$ the density; $U_i$ is the fluid’s 4-velocity.

Einstein famously modified (or perhaps generalised) the original equations by adding a cosmological constant term to the left hand side thus:

$$R_{ij} - \frac{1}{2} g_{ij} R - \Lambda g_{ij} = \frac{8\pi G}{c^4} T_{ij}.$$

Doing this essentially modifies the description of gravity, or appears to do so because it happens to be written on the left hand side of the equation. In fact one could equally well move the term involving $\Lambda$ to the other side and absorb it into a redefined energy-momentum tensor, $\tilde{T}_{ij}$:

$$R_{ij} - \frac{1}{2} g_{ij} R = \frac{8\pi G}{c^4} \tilde{T}_{ij}.$$

The new energy-momentum tensor needed to make this work is of the form

$$\tilde{T}_{ij} = T_{ij} + \frac{\Lambda c^4}{8\pi G} g_{ij} = (\tilde{p} + \tilde{\rho} c^2) U_i U_j - \tilde{p} g_{ij},$$

where

$$\tilde{p} = p - \frac{\Lambda c^4}{8\pi G}, \qquad \tilde{\rho} = \rho + \frac{\Lambda c^2}{8\pi G}.$$

So the cosmological constant now looks like you didn’t modify gravity at all, but created an additional contribution to the pressure and density of the original fluid. In fact, considering the correction terms on their own it is clear that the cosmological constant acts exactly like an additional perfect fluid contribution with $p = -\rho c^2$.

This is just one simple example wherein a modification of the gravitational part of the theory can be made to look like the appearance of a peculiar form of matter. More complicated versions of this idea – most of them entirely speculative – abound in theoretical cosmology. That’s just what cosmologists are like.

Over the last few decades cosmology has ~~suffered an invasion by~~ been stimulated and enriched by particle physicists who would like to understand how such a mysterious form of energy might arise in their theories. That at least partly explains why, in one sense at least, modern cosmologists prefer to dress to the right.

Incidentally, another interesting point is why people say such a fluid describes a cosmological “vacuum” energy. In the cosmological setting, i.e. assuming the fluid is distributed in a homogeneous and isotropic fashion, the energy density of the expanding Universe varies with (cosmological proper) time according to

$$\dot{\rho} = -3 \left(\frac{\dot{a}}{a}\right) \left(\rho + \frac{p}{c^2}\right),$$

where $a$ is the cosmic scale factor, so for our strange fluid, the second term in brackets vanishes and we have $\dot{\rho} = 0$. As the universe expands, normal forms of matter and radiation get diluted, but the energy density of this stuff remains constant. It seems to me to be quite appropriate for a vacuum to be something which, no matter how hard you try, you can’t dilute!
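To put numbers on the dilution argument, here is a small sketch. It assumes an equation of state of the form $p = w\rho c^2$ with constant $w$ (this parameter is introduced here for illustration and does not appear in the post), for which the standard fluid equation $\dot{\rho} = -3(\dot{a}/a)(\rho + p/c^2)$ integrates to $\rho \propto a^{-3(1+w)}$, with $a$ the cosmic scale factor.

```python
def density_after_expansion(rho_initial: float, scale_factor: float, w: float) -> float:
    """Density after the Universe has expanded by `scale_factor`.

    Assumes a perfect fluid with p = w * rho * c^2 and constant w,
    so that rho scales as a**(-3 * (1 + w)).
    """
    if scale_factor <= 0:
        raise ValueError("scale factor must be positive")
    return rho_initial * scale_factor ** (-3.0 * (1.0 + w))


if __name__ == "__main__":
    # Double the size of the Universe and see what is left of each component.
    for name, w in (("matter", 0.0), ("radiation", 1.0 / 3.0), ("vacuum", -1.0)):
        print(name, density_after_expansion(1.0, 2.0, w))
```

Pressureless matter ($w = 0$) drops by a factor of 8 when the scale factor doubles and radiation ($w = 1/3$) by a factor of 16, while the $w = -1$ fluid is unchanged.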

I hope this clarifies the situation.

Bayes’ Razor

Posted in Bad Statistics, The Universe and Stuff with tags Bayes Factor, Bayesian Model Selection, Bayesian probability, Cosmology, Evidence, Ockham's Razor, Physics, Science, Theory of Everything, William of Ockham on February 19, 2011 by telescoper

It’s been quite a while since I posted a little piece about Bayesian probability. That one and the others that followed it (here and here) proved to be surprisingly popular so I’ve been planning to add a few more posts whenever I could find the time. Today I find myself in the office after spending the morning helping out with a very busy UCAS visit day, and it’s raining, so I thought I’d take the opportunity to write something before going home. I think I’ll do a short introduction to a topic I want to do a more technical treatment of in due course.

A particularly important feature of Bayesian reasoning is that it gives precise motivation to things that we are generally taught as rules of thumb. The most important of these is Ockham’s Razor. This famous principle of intellectual economy is variously presented in Latin as Pluralitas non est ponenda sine necessitate or Entia non sunt multiplicanda praeter necessitatem. Either way, it means basically the same thing: the simplest theory which fits the data should be preferred.

William of Ockham, to whom this dictum is attributed, was an English Scholastic philosopher (probably) born at Ockham in Surrey in 1280. He joined the Franciscan order around 1300 and ended up studying theology in Oxford. He seems to have been an outspoken character, and was in fact summoned to Avignon in 1323 to account for his alleged heresies in front of the Pope, and was subsequently confined to a monastery from 1324 to 1328. He died in 1349.

In the framework of Bayesian inductive inference, it is possible to give precise reasons for adopting Ockham’s razor. To take a simple example, suppose we want to fit a curve to some data. In the presence of noise (or experimental error) which is inevitable, there is bound to be some sort of trade-off between goodness-of-fit and simplicity. If there is a lot of noise then a simple model is better: there is no point in trying to reproduce every bump and wiggle in the data with a new parameter or physical law because such features are likely to be features of the noise rather than the signal. On the other hand if there is very little noise, every feature in the data is real and your theory fails if it can’t explain it.

To go a bit further it is helpful to consider what happens when we generalize one theory by adding to it some extra parameters. Suppose we begin with a very simple theory, just involving one parameter $p$, but we fear it may not fit the data. We therefore add a couple more parameters, say $q$ and $r$. These might be the coefficients of a polynomial fit, for example: the first model might be a straight line (with fixed intercept), the second a cubic. We don’t know the appropriate numerical values for the parameters at the outset, so we must infer them by comparison with the available data.

Quantities such as $p$, $q$ and $r$ are usually called “floating” parameters; there are as many as a dozen of these in the standard Big Bang model, for example.

Obviously, having three degrees of freedom with which to describe the data should enable one to get a closer fit than is possible with just one. The greater flexibility within the general theory can be exploited to match the measurements more closely than the original. In other words, such a model can improve the likelihood, i.e. the probability of the obtained data arising (given the noise statistics – presumed known) if the signal is described by whatever model we have in mind.

But Bayes’ theorem tells us that there is a price to be paid for this flexibility, in that each new parameter has to have a prior probability assigned to it. This probability will generally be smeared out over a range of values where the experimental results (contained in the likelihood) subsequently show that the parameters don’t lie. Even if the extra parameters allow a better fit to the data, this dilution of the prior probability may result in the posterior probability being lower for the generalized theory than the simple one. The more parameters are involved, the bigger the space of prior possibilities for their values, and the harder it is for the improved likelihood to win out. Arbitrarily complicated theories are simply improbable. The best theory is the most probable one, i.e. the one for which the product of likelihood and prior is largest.

To give a more quantitative illustration of this consider a given model $M$ which has a set of $N$ floating parameters represented as a vector $\lambda = (\lambda_1, \ldots, \lambda_N)$; in a sense each choice of parameters represents a different model or, more precisely, a member of the family of models labelled $M$.

Now assume we have some data $D$ and can consequently form a likelihood function $P(D|\lambda, M)$. In Bayesian reasoning we have to assign a prior probability $P(\lambda|M)$ to the parameters of the model which, if we’re being honest, we should do in advance of making any measurements!

The interesting thing to look at now is not the best-fitting choice of model parameters but the extent to which the data support the model in general. This is encoded in a sort of average of likelihood over the prior probability space:

$$P(D|M) = \int P(D|\lambda, M)\, P(\lambda|M)\, d^N\lambda.$$

This is just the normalizing constant $K$ usually found in statements of Bayes’ theorem which, in this context, takes the form

$$P(\lambda|D, M) = K^{-1}\, P(\lambda|M)\, P(D|\lambda, M).$$

In statistical mechanics things like $K$ are usually called partition functions, but in this setting $K$ is called the evidence, and it is used to form the so-called Bayes Factor, used in a technique known as Bayesian model selection of which more anon….

The usefulness of the Bayesian evidence emerges when we ask the question whether our $N$ parameters are sufficient to get a reasonable fit to the data. Should we add another one to improve things a bit further? And why not another one after that? When should we stop?

The answer is that although adding an extra degree of freedom can increase the first factor in the integral defining $K$ (the likelihood), it also imposes a penalty in the second factor, the prior, because the more parameters the more smeared out the prior probability must be. If the improvement in fit is marginal and/or the data are noisy, then the second factor wins and the evidence for a model with $N+1$ parameters is lower than that for the $N$-parameter version. Ockham’s razor has done its job.
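Here is a toy numerical version of that argument, offered as a sketch and not as a serious analysis. Everything in it is an assumption made for illustration: the five data points (a straight line through the origin plus small fixed offsets), the known Gaussian noise level, the uniform priors on the range -5 to 5, and the use of a quadratic term as the extra parameter in place of the full cubic, to keep the integration grid small. The evidence is computed by brute force as the likelihood averaged over the prior.

```python
import math

# Hypothetical data: a straight line y = 2x plus small, fixed "noise" offsets.
XS = [-2.0, -1.0, 0.0, 1.0, 2.0]
YS = [2.0 * x + e for x, e in zip(XS, [0.5, -0.5, 0.3, -0.4, 0.2])]
SIGMA = 1.0          # noise standard deviation, presumed known
LO, HI = -5.0, 5.0   # assumed uniform prior range for every parameter
N_CELLS = 200        # grid cells per parameter
H = (HI - LO) / N_CELLS


def likelihood(p, q):
    """Gaussian likelihood of the data for the model y = p*x + q*x**2."""
    chi2 = sum(((y - p * x - q * x * x) / SIGMA) ** 2 for x, y in zip(XS, YS))
    norm = (2.0 * math.pi * SIGMA ** 2) ** (-0.5 * len(XS))
    return norm * math.exp(-0.5 * chi2)


def midpoints():
    """Midpoints of the grid cells covering the prior range."""
    return [LO + (i + 0.5) * H for i in range(N_CELLS)]


def evidence_simple():
    """Evidence for the one-parameter model (q fixed at zero)."""
    prior = 1.0 / (HI - LO)
    return sum(likelihood(p, 0.0) * prior * H for p in midpoints())


def evidence_extended():
    """Evidence for the two-parameter model (p and q both floating)."""
    prior = 1.0 / (HI - LO) ** 2
    grid = midpoints()
    return sum(likelihood(p, q) * prior * H * H for p in grid for q in grid)


def best_likelihood(free_q):
    """Largest likelihood found on the grid, with or without q floating."""
    grid = midpoints()
    qs = grid if free_q else [0.0]
    return max(likelihood(p, q) for p in grid for q in qs)


if __name__ == "__main__":
    print("best likelihood:", best_likelihood(False), best_likelihood(True))
    print("evidence:", evidence_simple(), evidence_extended())
    print("Bayes factor (simple / extended):", evidence_simple() / evidence_extended())
```

With these made-up numbers the extra parameter does buy a slightly better best fit, but the evidence still favours the simpler model by a factor of about twenty, because most of the prior range of the extra parameter is ruled out by the data. Narrowing the prior range or making the data genuinely curved would shift the balance the other way.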

This is a satisfying result that is in nice accord with common sense. But I think it goes much further than that. Many modern-day physicists are obsessed with the idea of a “Theory of Everything” (or TOE). Such a theory would entail the unification of all physical theories – all laws of Nature, if you like – into a single principle. An equally accurate description would then be available, in a single formula, of phenomena that are currently described by distinct theories with separate sets of parameters. Instead of textbooks on mechanics, quantum theory, gravity, electromagnetism, and so on, physics students would need just one book.

The physicist Stephen Hawking has described the quest for a TOE as like trying to read the Mind of God. I think that is silly. If a TOE is ever constructed it will be the most economical available description of the Universe. Not the Mind of God. Just the best way we have of saving paper.

MSSL & CSS

Posted in Biographical, The Universe and Stuff with tags Cardiff Scientific Society, Jet streams, MSSL, Mullard Space Science Laboratory on February 17, 2011 by telescoper

I was up early yet again this morning to catch a train to Guildford. From there I was whisked off by a taxi into the Surrey countryside to visit MSSL, or the ~~Minimal Supersymmetric Standard~~ Mullard Space Science Laboratory, which is an outpost of University College London. No sooner had I got there than I was whisked off again to a very nice local country pub for lunch and a pint, before being returned, suitably inebriated, to give my seminar.

I’ve never been to MSSL before – nor Guildford, for that matter – and my day out was a very pleasant surprise. Not only were there no disasters on the trains, despite having to travel via Reading, but the fine springlike weather gave me good views of the green and pleasant land that is Surrey. MSSL is itself on the top of a hill, and on a clear day you can see as far as the Sussex downs to the South. But not quite today as it was a little misty.

I had to leave not long after my talk finished in order to get back to Guildford, a drive of about 40 minutes. I got there with about 15 minutes to spare, but it turned out that the train before the one I was intending to catch was about 15 minutes late so I got straight on it. I thus got to Reading two minutes ahead of the train before the one I was planning to catch there, so in the end got home about half an hour early. Which was nice.

I enjoyed the visit there enormously. Everyone was very friendly. Apparently, some of them even read this blog so I’d like to say thanks for the invitation and for struggling manfully to stay awake as I droned on after the pub lunch.

I didn’t get much time to post yesterday either, because I had to attend a function organised by Cardiff Scientific Society (of which I am a Committee Member). This was the occasion of the annual Lord Phillips Memorial Lecture, given this year by Professor Sir Brian Hoskins on the subject of Jet Streams in Weather and Climate. Jet streams are fascinating but highly complex phenomena and it’s clear that there’s a lot about them meteorologists don’t understand fully. One thing I did learn during the lecture, however, was that when people say that changes in the Atlantic jet stream “cause” unusual weather (such as our recent cold spell, or the floods of 2007), they’re wrong. It seems clear that the jet stream is part of the atmospheric pattern that gives rise to such events but can’t be said to be responsible for them.

Anyway, after a fascinating lecture we adjourned with the speaker to the Vice-Chancellor’s dining room, for a (fairly) late supper. One of the perks of the job, I guess. I wasn’t too late getting home, and got to bed early enough to make getting up at 6am not too stressful.

With another busy day tomorrow, and a UCAS event on Saturday that I (unwisely) volunteered to help with, I think I’m going to get an early night tonight.

Toodle-pip!