I’m very pressed for time this week so I thought I’d cheat by resurrecting and updating an old post from way back when I had just started blogging, about three years ago. I thought of doing this because I just came across a YouTube clip of the late, great Alfred Hitchcock, which you’ll now find in the post. I’ve also made a couple of minor editorial changes, but basically it’s a recycled piece and you should therefore read it for environmental reasons.

–0–

Unpick the plot of any thriller or suspense movie and the chances are that somewhere within it you will find lurking at least one MacGuffin. This might be a tangible thing, such as the eponymous sculpture of a falcon in the archetypal noir classic The Maltese Falcon, or it may be rather nebulous, like the “top secret plans” in Hitchcock’s The 39 Steps. Its true character may never be fully revealed, as in the case of the glowing contents of the briefcase in Pulp Fiction, which is a classic example of the “undisclosed object” type of MacGuffin. Or it may be scarily obvious, like a doomsday machine or some other “Big Dumb Object” you might find in a science fiction thriller. It may not even be a real thing at all. It could be an event or an idea or even something that doesn’t exist in any real sense, such as the fictitious decoy character George Kaplan in North by Northwest.

Whatever it is or is not, the MacGuffin is responsible for kick-starting the plot. It makes the characters embark upon the course of action they take as the tale begins to unfold. This plot device was particularly beloved by Alfred Hitchcock (who was responsible for introducing the word to the film industry). Hitchcock was, however, always at pains to ensure that the MacGuffin never played as important a role in the mind of the audience as it did for the protagonists. As the plot twists and turns – as it usually does in such films – and its own momentum carries the story forward, the importance of the MacGuffin tends to fade, and by the end we have often forgotten all about it. Hitchcock’s movies rarely bother to explain their MacGuffin(s) in much detail and they often confuse the issue even further by mixing genuine MacGuffins with mere red herrings.

Here is the man himself explaining the concept at the beginning of this clip. (The rest of the interview is also enjoyable, covering such diverse topics as laxatives, ravens and nudity.)

North by Northwest is a fine example of a multi-MacGuffin movie. The centre of its convoluted plot involves espionage and the smuggling of what is only cursorily described as “government secrets”. But although this is behind the whole story, it is the emerging romance, accidental betrayal and frantic rescue involving the lead characters played by Cary Grant and Eva Marie Saint that really engage the characters and the audience as the film gathers pace. The MacGuffin is a trigger, but it soon fades into the background as other factors take over.

There’s nothing particularly new about the idea of a MacGuffin. I suppose the ultimate example is the Holy Grail in the tales of King Arthur and the Knights of the Round Table and, much more recently, The Da Vinci Code. The original Grail itself is basically a peg on which to hang a series of otherwise disconnected stories. It is barely mentioned once each individual story has started and, of course, is never found.

Physicists are fond of describing things as “The Holy Grail” of their subject, such as the Higgs Boson or gravitational waves. This always seemed to me to be an unfortunate description, as the Grail quest consumed a huge amount of resources in a predictably fruitless hunt for something whose significance could be seen to be dubious at the outset. The MacGuffin Effect nevertheless continues to reveal itself in science, although in different forms to those found in Hollywood.

The Large Hadron Collider (LHC), switched on to the accompaniment of great fanfares a few years ago, provides a nice example of how the MacGuffin actually works pretty much backwards in the world of Big Science. To the public, the LHC was built to detect the Higgs Boson, a hypothetical beastie introduced to account for the masses of other particles. If it exists, the high-energy collisions engineered by the LHC should reveal its presence. The Higgs Boson is thus the LHC’s own MacGuffin. Or at least it would be if it were really the reason why the LHC was built. In fact there are dozens of experiments at CERN and many of them have very different motivations from the quest for the Higgs, such as the search for evidence of supersymmetry.

Particle physicists are not daft, however, and they have realised that the public and, perhaps more importantly, government funding agencies need to have a really big hook to hang such a big bag of money on. Hence the emergence of the Higgs as a sort of master MacGuffin, concocted specifically for public consumption, which is much more effective politically than the plethora of mini-MacGuffins which, to be honest, would be a fairer description of the real state of affairs.

Even this MacGuffin has its problems, though. The Higgs mechanism is notoriously difficult to explain to the public, so some have resorted to a less specific but more misleading version: “The Big Bang”. As I’ve already griped, the LHC will never generate energies anything like the Big Bang did, so I don’t have any time for the language of the “Big Bang Machine”, even as a MacGuffin.

While particle physicists might pretend to be doing cosmology, we astrophysicists have to contend with MacGuffins of our own. One of the most important discoveries we have made about the Universe in the last decade is that its expansion seems to be accelerating. Since gravity usually tugs on things and makes them slow down, the only explanation that we’ve thought of for this perverse situation is that there is something out there in empty space that pushes rather than pulls. This has various possible names, but Dark Energy is probably the most popular, adding an appropriately noirish edge to this particular MacGuffin. It has even taken over in prominence from its much older relative, Dark Matter, although that one is still very much around.

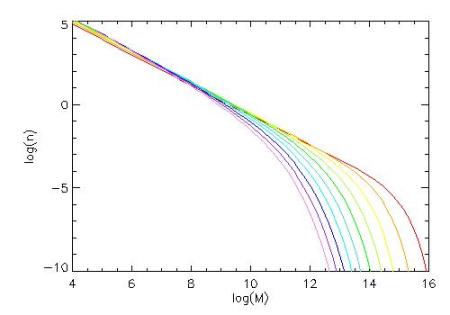

We have very little idea what Dark Energy is, where it comes from, or how it relates to other forms of energy we are more familiar with, so observational astronomers have jumped in with various grandiose strategies to find out more about it. This has spawned a booming industry in surveys of the distant Universe (such as the Dark Energy Survey) all aimed ostensibly at unravelling the mystery of the Dark Energy. It seems that to get any funding at all for cosmology these days you have to sprinkle the phrase “Dark Energy” liberally throughout your grant applications.

The old-fashioned “observational” way of doing astronomy – by looking at things hard enough until something exciting appears (which it does with surprising regularity) – has been replaced by a more “experimental” approach, more like that of the LHC. We can no longer do deep surveys of galaxies to find out what’s out there. We have to do it “to constrain models of Dark Energy”. This is just one example of the not necessarily positive influence that particle physics has had on astronomy in recent times and it has been criticised very forcefully by Simon White.

Whatever the motivation for doing these projects now, they will undoubtedly lead to new discoveries. But my own view is that there will never be a solution of the Dark Energy problem until it is understood much better at a conceptual level, and that will probably mean major revisions of our theories of both gravity and matter. I venture to speculate that in twenty years or so people will look back on the obsession with Dark Energy with some amusement, as our theoretical language will have moved on sufficiently to make it seem irrelevant.

But that’s how it goes with MacGuffins. Even the Maltese Falcon turned out to be a fake in the end.
